The disclosure landscape

YouTube’s approach to AI-generated content has been shifting steadily, and if you’re producing video content for brands or your own channels, you need to pay attention.

The platform now requires creators to disclose when content includes realistic-looking material made with AI. That includes synthetic voices, digitally altered faces, and AI-generated footage of real events or places. The labels show up in the video’s expanded description - and for sensitive topics, directly on the video player itself.

This isn’t optional. Failing to disclose can result in content removal and, for repeat offenders, channel penalties.

What actually requires disclosure

This is where it gets nuanced, and where I’ve seen a lot of confusion with clients.

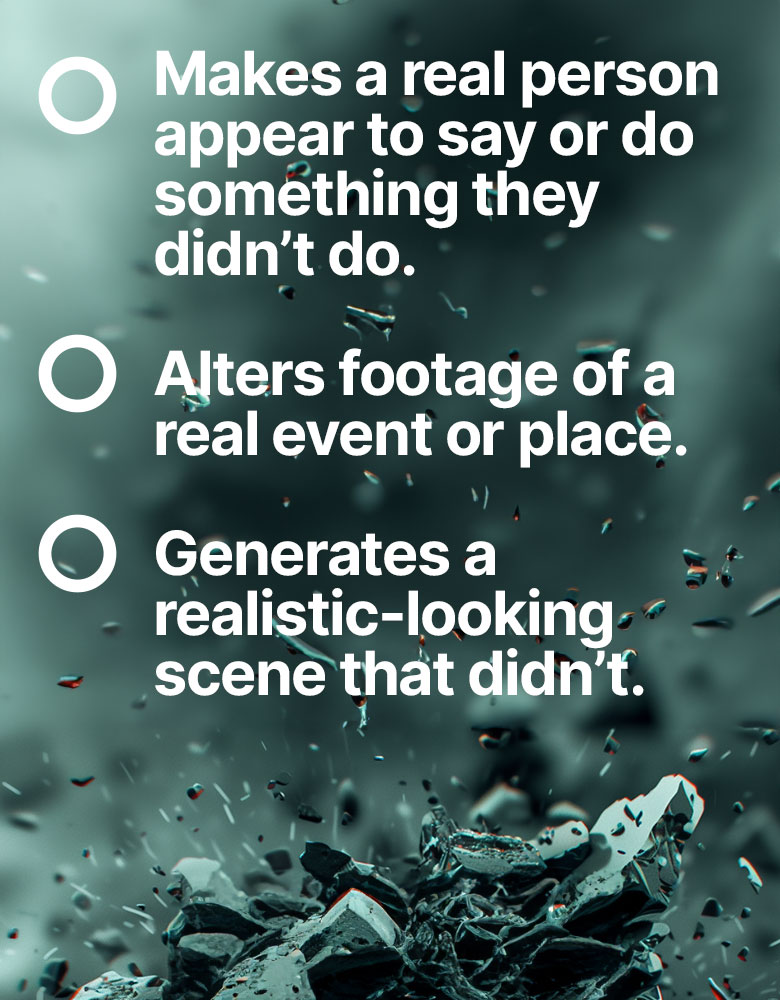

You need to disclose when:

- AI generates realistic footage that could be mistaken for real video

- You use synthetic voices that sound like real people

- AI alters real footage to change what happened or what was said

- You create realistic depictions of real events that didn’t actually occur

You don’t need to disclose when:

- AI assists with scripting or ideation

- You use AI for colour grading, lighting adjustment, or standard post-production

- AI generates obviously fictional or animated content

- You use AI tools for captions, translations, or accessibility features

The distinction YouTube is drawing is between AI as a production tool and AI that creates or alters realistic content a viewer could mistake for the real thing. Using Premiere’s AI-powered audio cleanup? Not disclosure territory. Using an AI voice clone of a public figure? Absolutely disclosure territory.
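If it helps to see the two lists above as a rule of thumb, here’s a minimal Python sketch. It’s my own simplification, not YouTube’s official test: the `AIUse` class, its field names, and the `needs_disclosure` function are all hypothetical, and real edge cases will still need human judgement.

```python
from dataclasses import dataclass


@dataclass
class AIUse:
    """One way AI was used in a video. All names here are hypothetical."""
    description: str
    # True if AI created or changed what the viewer sees or hears,
    # beyond routine polish such as colour grading or audio cleanup.
    generates_or_alters: bool
    # True if a viewer could reasonably mistake the result for real.
    realistic: bool


def needs_disclosure(use: AIUse) -> bool:
    # Rule of thumb from the lists above: disclose only when AI
    # generated or altered the content AND the result looks or sounds real.
    return use.generates_or_alters and use.realistic


examples = [
    AIUse("AI voice clone of a public figure", True, True),
    AIUse("AI-assisted script outline", False, False),
    AIUse("AI colour grading", False, True),
    AIUse("Obviously animated AI sequence", True, False),
]

for use in examples:
    label = "disclose" if needs_disclosure(use) else "no disclosure needed"
    print(f"{use.description}: {label}")
```

Only the voice clone comes out as “disclose” here. The other three fail one of the two conditions, which is the same reasoning as the lists.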

Does it affect reach?

This is the question everyone asks. And honestly, the answer so far is: not really.

I’ve been watching the data across several client channels since these rules came in. Videos with AI disclosure labels aren’t seeing measurably different performance from those without. Click-through rates, watch time, and subscriber conversion all look normal.

YouTube has stated explicitly that the disclosure label isn’t a ranking signal. The algorithm doesn’t penalise you for being transparent. If anything, the opposite risk is bigger - getting flagged for non-disclosure could genuinely hurt your channel.

That said, audience perception matters. And this is where it gets interesting.

The audience trust equation

I’ve noticed a generational split in how audiences respond to AI disclosure. Younger viewers - the digital-native cohort - largely don’t care. They’ve grown up with filters, synthetic media, and AI tools. An AI label on a video is about as noteworthy as a “shot on iPhone” credit.

Older audiences and B2B viewers tend to be more cautious. In sectors like finance, healthcare, or education, an AI disclosure label can trigger questions about accuracy and trustworthiness. Not because the content is worse - but because the association between “AI” and “unreliable” hasn’t fully faded yet.

For brands, this means thinking about your specific audience before deciding how prominently to feature AI in your production process. Disclosure is mandatory where required. How you frame it in your broader content strategy is a choice.

What this means for production strategy

Here’s my practical advice for brands and agencies navigating this.

Be proactive about disclosure. Don’t wait to be caught. If your content uses AI in a way that meets YouTube’s criteria, label it. The reputational cost of being seen to hide AI usage far outweighs any perceived benefit of staying quiet.

Build AI disclosure into your production workflow. Don’t treat it as an afterthought. When you’re planning a video, note which elements involve AI generation and flag them for disclosure at the upload stage. Make it part of the process, not a last-minute panic.

Focus on quality, not origin. The best defence against any potential AI stigma is making content that’s genuinely good. Audiences forgive a lot when the content is valuable, entertaining, or informative. They don’t forgive lazy, generic output - whether it’s AI-generated or not.

Watch the regulatory direction. YouTube’s rules are a preview of where broader regulation is heading. The EU AI Act already has transparency requirements for synthetic media. The UK is developing its own framework. What YouTube requires today, legislation will likely require tomorrow.

The bigger picture

What I find most interesting about YouTube’s approach is that it’s essentially creating a transparency standard for the entire content industry. When the world’s largest video platform sets rules about AI disclosure, those rules ripple outward.

Creators who build good habits now - transparent disclosure, quality-first production, clear audience communication - will be in a strong position as regulation catches up. Those who try to hide AI usage or game the system are storing up problems.

My advice? Treat disclosure as a feature, not a bug. Be upfront about how you use AI. Focus your energy on making the content brilliant. The label on the tin matters less than what’s inside it.

Where this is heading

YouTube’s rules will keep evolving. They’ve already signalled that synthetic music, AI-generated thumbnails, and AI-assisted editing could come under the disclosure umbrella in future. The finish line keeps moving.

The smart play is to stay ahead of it. Build workflows that track AI usage across your production pipeline. Create internal guidelines that go slightly beyond the current requirements. That way, when the rules tighten - and they will - you’re already compliant.
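As a rough illustration of what tracking AI usage could look like, here’s a minimal Python sketch of a per-video log. Everything in it is an assumption for illustration: the `AIUsageEntry` and `VideoAILog` names, the fields, and the idea of a separate internal-policy flag are mine, not part of any YouTube tool or API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AIUsageEntry:
    """One AI-assisted element in a video (hypothetical structure)."""
    element: str                      # e.g. "narration", "b-roll"
    tool: str                         # whichever tool the team used
    platform_requires_disclosure: bool
    # Stricter in-house rule that goes slightly beyond the platform's.
    internal_policy_flag: bool = False


@dataclass
class VideoAILog:
    title: str
    entries: List[AIUsageEntry] = field(default_factory=list)

    def record(self, entry: AIUsageEntry) -> None:
        self.entries.append(entry)

    def to_disclose(self) -> List[str]:
        # Elements that must be labelled at the upload stage.
        return [e.element for e in self.entries
                if e.platform_requires_disclosure]

    def to_review(self) -> List[str]:
        # Not required today, but flagged by the internal guideline.
        return [e.element for e in self.entries
                if e.internal_policy_flag
                and not e.platform_requires_disclosure]


log = VideoAILog("Product launch explainer")
log.record(AIUsageEntry("narration", "voice cloning tool", True))
log.record(AIUsageEntry("thumbnail", "image generator", False, True))
log.record(AIUsageEntry("captions", "auto-captioning", False))

print("Disclose at upload:", log.to_disclose())
print("Review under internal policy:", log.to_review())
```

The point of the second list is the “slightly beyond” idea: anything you already flag internally is easy to switch to a formal disclosure if the platform rules tighten.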

Don’t fear the label. Fear being behind the curve.