Your new marketing exec carries more computational potential in their pocket than NASA had when they landed on the moon. Yet they still struggle to recall the process you taught them last week.

This isn’t a failure of training. It’s a misunderstanding of what training is for.

We’ve been here before. In 1975, the National Advisory Committee on Mathematical Education recommended that students from eighth grade upward should have access to calculators for all classwork and exams. The debates were fierce: would children lose the ability to compute? Would we create a generation dependent on machines for basic arithmetic?

It took nearly twenty years for calculators to become standard in UK exams. The 1982 Cockcroft Report - “Mathematics Counts” - laid out the case clearly: the availability of a calculator in no way reduces the need for mathematical understanding on the part of the person using it. What changed wasn’t whether students learned maths. It was which maths they needed to internalise versus which they could safely offload.

I work with enterprise organisations designing learning and development (L&D) frameworks that should stick, that should change behaviour, that should drive performance. And increasingly, we’re confronting a version of that same question: when infinite information lives in your pocket, what should live in your head?

The learning relationship is shifting

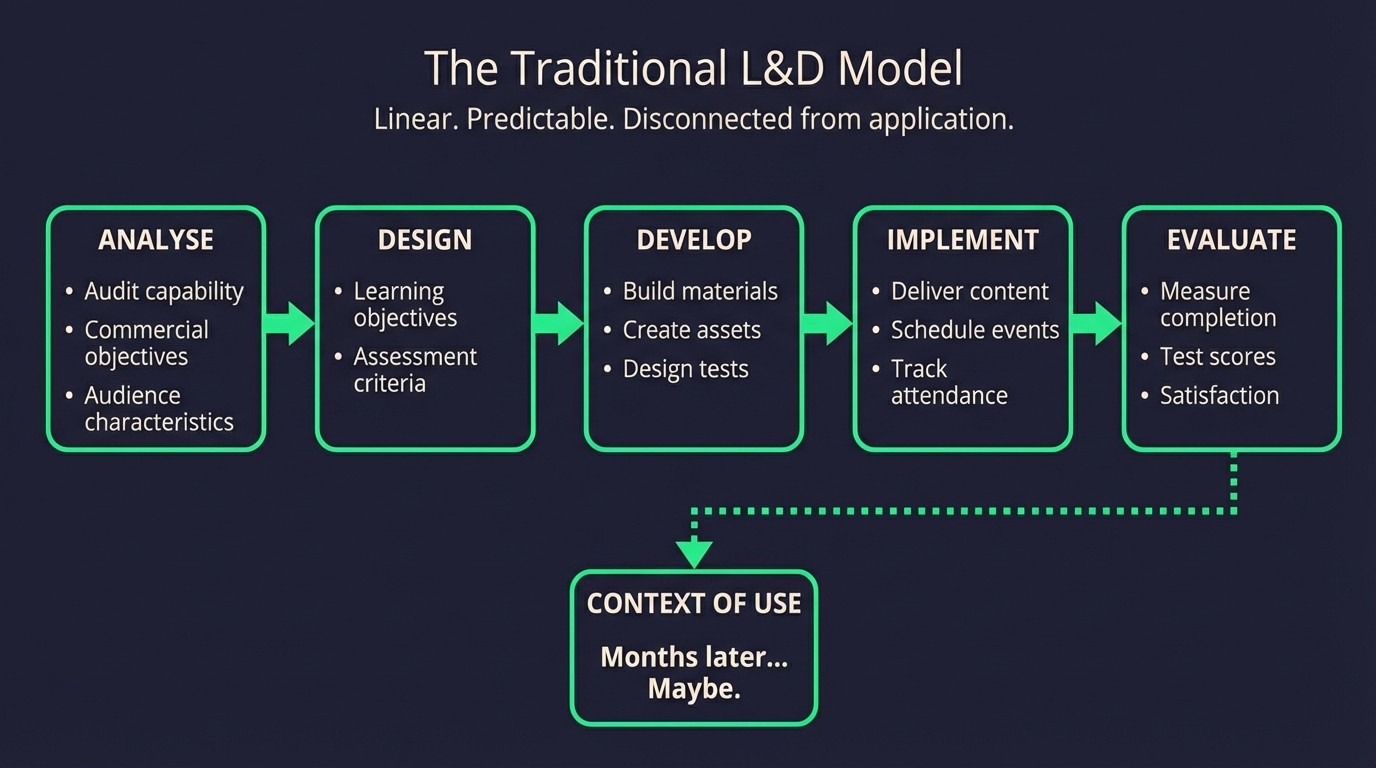

The traditional L&D model assumed humans would process learned content, retain it, then apply it in context. Linear. Predictable. Measurable by completion rates and quiz scores.

But AI is transforming this into something more nuanced. When we design learning experiences now, we’re not just creating content for human consumption. We’re creating modular, contextually tagged materials that AI can orchestrate in real time based on individual learning patterns, business contexts, and performance data.

The learner increasingly becomes the audience for an AI that assembles personalised learning experiences where they are relevant and when they are necessary. Context and compliance, surfaced at the point of need.
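
For readers who think in code, here is a minimal sketch of what “modular, contextually tagged materials” could look like in practice. It is an illustration only, not a description of any particular platform: the `LearningModule` schema, the tag names, and the ranking rule (compliance first, then closest match to the learner’s context) are all assumptions I’ve made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LearningModule:
    """A small, self-contained piece of learning content (hypothetical schema)."""
    title: str
    tags: frozenset[str]
    mandatory: bool = False  # assumed flag, e.g. for a compliance requirement


def select_modules(modules, context_tags, limit=3):
    """Pick the modules that best match the learner's current context.

    Modules with no tag in common with the context are dropped. The rest
    are ordered with mandatory modules first, then by number of shared tags.
    """
    scored = []
    for module in modules:
        overlap = len(module.tags & context_tags)
        if overlap == 0:
            continue
        scored.append((module.mandatory, overlap, module))
    # Sort on (mandatory, overlap) only; the module itself is never compared.
    scored.sort(key=lambda item: (item[0], item[1]), reverse=True)
    return [module for _, _, module in scored[:limit]]


# Hypothetical content library and learner context.
library = [
    LearningModule("Lifting awkward loads",
                   frozenset({"warehouse", "manual-handling"}), mandatory=True),
    LearningModule("Brand voice basics", frozenset({"marketing"})),
    LearningModule("Warehouse layout tour",
                   frozenset({"warehouse", "onboarding"})),
]
picked = select_modules(library, {"warehouse", "onboarding"})
```

In this toy run a new starter in the warehouse is offered the mandatory lifting module first, then the layout tour, and never sees the marketing content. A real orchestration layer would weigh far richer signals, but the design question is the same: what gets tagged, and what decides the order.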

The memory architecture problem

Working with clients across sectors, I’ve noticed that the most successful AI implementations begin with understanding what humans should remember versus what AI should handle for them.

But there’s a cautionary tale here. Research on GPS navigation shows what happens when we offload cognitive work without intention. Studies have found that habitual GPS users have worse spatial memory, show reduced hippocampal activity, and struggle to form cognitive maps even when navigating without their devices. Unlike calculators - where educators consciously redesigned curricula around the new capability - GPS offloading happened by default. We didn’t decide what to keep. We just stopped doing it.

This is where it gets interesting for enterprise L&D. When AI can provide any procedural knowledge instantly, human memory becomes valuable for different things: contextual pattern recognition, emotional intelligence, and what I’ve started calling “symbiotic capability” - the uniquely human ability to combine AI-provided information with experiential wisdom.

The breakthrough comes when we stop trying to fill human memory with information and start building memory pathways that trigger experiential thought. Couple this with operational validation through fine-tuned AI, and you’re creating learning that is triggered at the point of need and grounded in phased progression rather than fact recall.

The manual handling problem

Every enterprise has delivered manual handling training at some point. It’s a liability thing. When accidents occur due to inadequate training, employers face medical expenses and legal action.

But traditional “lift with your legs, not your back” training has terrible retention rates. It’s compliance theatre.

Employees forget it within minutes because they’re memorising procedures rather than building capability.

The traditional approach that doesn’t work: hour-long presentations about proper lifting technique, employees memorising the “five-step lifting process,” annual refresher courses repeating the same information, compliance tick-boxes that satisfy legal requirements but don’t change behaviour.

Now flip it. Instead of making people memorise lifting procedures:

AI handles point-of-need context - instant access to weight limits, technique reminders, real-time posture feedback through wearables.

Humans learn body awareness patterns (“this feels wrong”), spatial recognition of awkward loads, and instinctive movement habits through guided repetition with immediate feedback.

Workers build muscle memory through practice. They develop confidence through successful pattern recognition rather than procedure memorisation. Context is applied at the point of need, rather than in a classroom three months prior.
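
To make the split concrete, here is a rough sketch of the “AI handles point-of-need context” half. Everything in it is assumed for illustration: the `LiftContext` fields, the wording of the prompts, and above all the 20 kg threshold, which is a placeholder and not safety guidance. A real system would take its limits from the organisation’s own risk assessment.

```python
from dataclasses import dataclass

# Placeholder threshold for illustration only. This is NOT real safety
# guidance; actual limits come from the employer's risk assessment.
ASSUMED_SOLO_LIMIT_KG = 20.0


@dataclass(frozen=True)
class LiftContext:
    """What the system knows at the moment of the lift (hypothetical fields)."""
    load_kg: float
    awkward_shape: bool = False


def point_of_need_prompt(ctx: LiftContext,
                         limit_kg: float = ASSUMED_SOLO_LIMIT_KG) -> str:
    """Return one short reminder, chosen from the context of this lift."""
    if ctx.load_kg > limit_kg:
        return "Over the solo limit: get help or use a lifting aid."
    if ctx.awkward_shape:
        return "Awkward load: check your grip and your route before lifting."
    return "Within limit: lift as practised."


reminder = point_of_need_prompt(LiftContext(load_kg=12.0, awkward_shape=True))
```

The lookup holds the facts nobody needs to memorise. What it cannot hold is the practised movement and the “this feels wrong” instinct, which is exactly the part the training should be building.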

It’s a simple example, but the concept applies across any enterprise L&D challenge: instead of filling human memory with information AI can provide instantly, start building the human capabilities that will accelerate discovery or amplify strategic direction.

The hybrid imperative

This isn’t about choosing between human learning and AI orchestration. It’s about designing symbiotic experiences where both contribute their strengths.

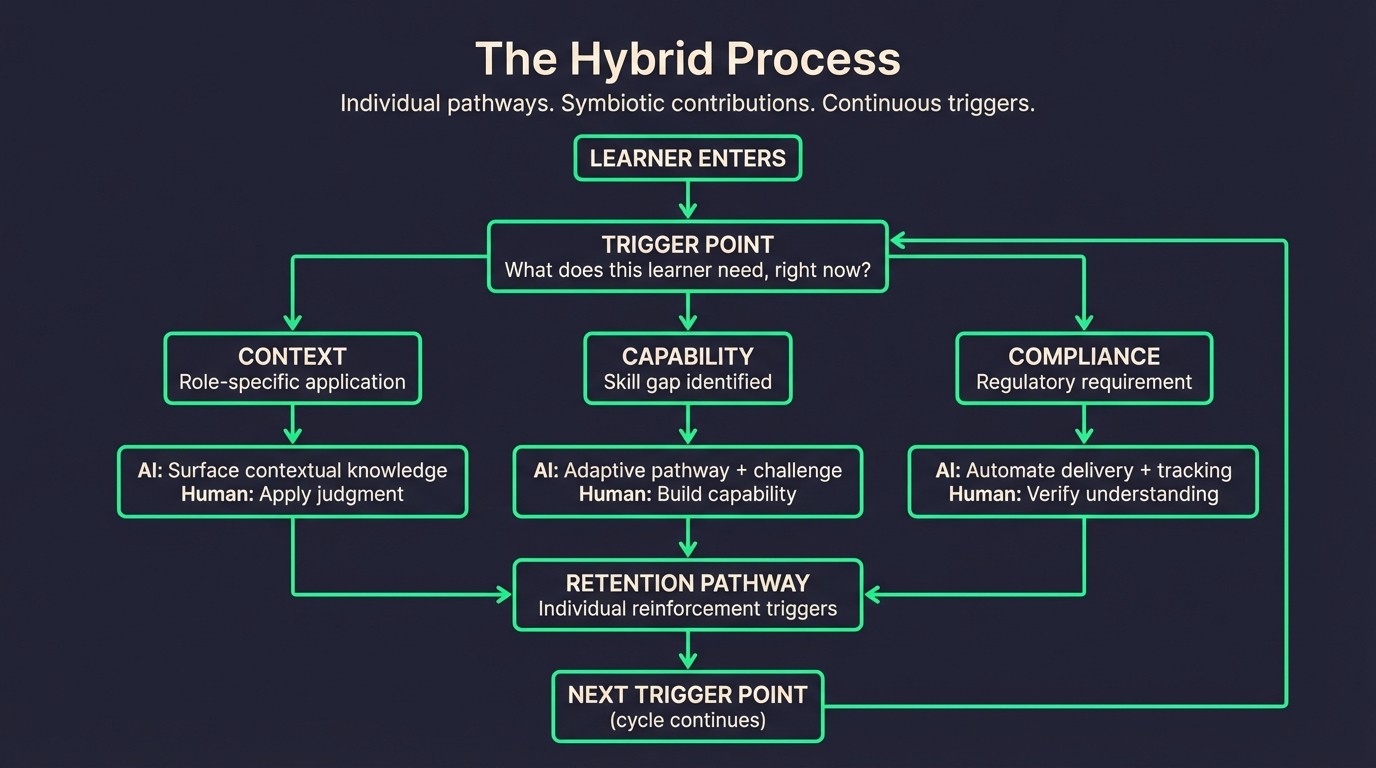

The tricky part is that the trigger points in a progression will be completely individual to the learner. A trigger could be a context, a capability, or a compliance requirement. This is where the true power of “hybrid” co-intelligence comes in.

Humans are driving the train, but we’re able to explore more of the world faster. Find ways of integrating truly useful knowledge. Experiment with failed opportunities. Refine potential successes more quickly.

The verification challenge

Here’s where it gets stickier. One of the sharpest insights from researchers studying AI and cognition is that as AI capabilities grow, so does opacity. We need these tools most for the tasks where we’re least able to verify them.

In production terms, that’s quality management, creative oversight, and process control stages. In L&D terms, it means shifting from measuring seat time and completion rates to evaluating learning effectiveness and application quality.

That’s what we’re still figuring out. In enterprise models, how do we benchmark L&D progression and retention when the relationship between learning and performance is being fundamentally restructured?

Working with enterprise clients often means working within technology anxiety. Resistance isn’t really about the technology - it’s about the loss of control and understanding. The organisations that will thrive are those that build human capabilities that enhance rather than compete with AI.

The calculator story ended well because educators asked the right question: not “should we allow this?” but “what should humans learn when machines handle arithmetic?” We’re at that same inflection point with AI. The question isn’t whether to use AI in L&D. It’s what we’re designing human memory to actually do.

This is the first in a series exploring how AI is reshaping enterprise learning. Next: why the rush to summarise might be costing us more than we realise.