There’s a pattern emerging in enterprise L&D conversations that I’ve been sitting with for a while now. It goes something like this: we’ve got too much content, learners don’t have time, so let’s use AI to summarise everything and surface the right information at the right moment.

It sounds like progress. It might be a problem.

In the previous piece, I wrote about calculators - how educators spent twenty years figuring out what students should memorise versus what machines should handle. They got it right because they asked the question consciously. GPS is the counterexample: we offloaded spatial navigation by default, and research now links habitual GPS use to measurable declines in spatial memory.

The question for enterprise L&D is which path we’re on. Are we making conscious decisions about what AI should handle? Or are we sleepwalking into cognitive offloading?

The efficiency trap

Advait Sarkar, a senior researcher at Microsoft leading their Tools for Thought initiative, put it sharply in a recent TED talk: we’re at risk of becoming “professional validators of robots’ opinions.” Knowledge workers who no longer engage with the materials of their craft. Intellectual tourists who visit ideas but don’t inhabit them.

His team surveyed 319 professionals about their AI use and found something that should concern anyone designing learning experiences. Workers reported putting less effort into critical thinking when working with AI than when working manually, and the effect was stronger among those with higher confidence in AI and lower confidence in themselves.

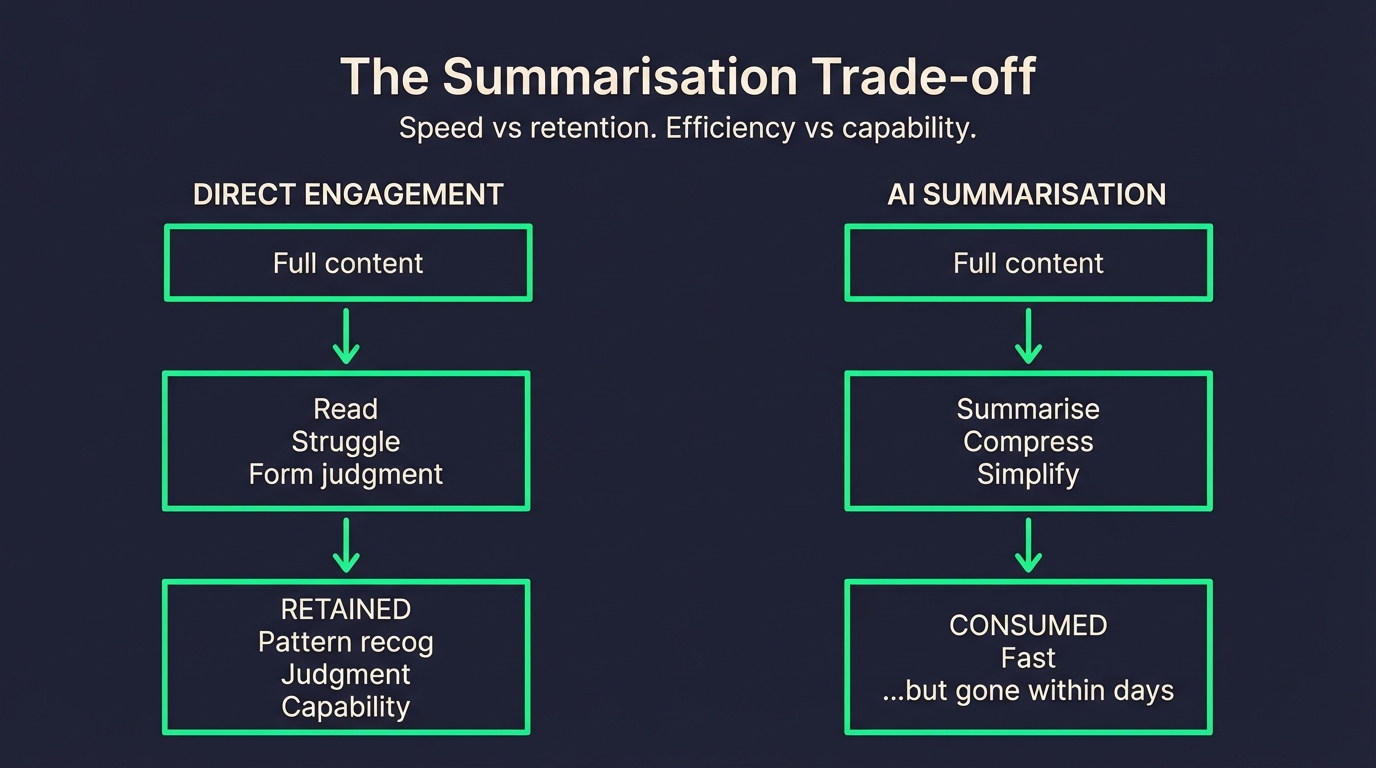

The research gets more uncomfortable from there. On a collective level, knowledge workers using AI assistance produce a smaller range of ideas than groups working manually. People remember less of what they wrote when AI helped them write it. And when they read AI-generated summaries - which is increasingly how learning content gets consumed - they remember less than if they’d read the original document.

The metacognition gap

What’s being lost isn’t just information. It’s what Sarkar describes as metacognition: the ability to think about your own thinking process.

Working with AI introduces new cognitive demands: reasoning about task goals, decomposing the task, evaluating whether AI is even applicable, and assessing the quality of its output. When you work directly with material, much of this happens implicitly as part of the process. It becomes problematic when that engagement is intermediated, because the thinking now has to be done deliberately, and it is easy to skip.

To put it bluntly: we’ve become middle managers for our own thoughts.

This has direct implications for how we design enterprise learning. If the goal is behaviour change - and it usually is - then learners need to engage with material, not just receive it. They need to form their own judgments, struggle with ambiguity, and build the pattern recognition that comes from wrestling with complexity.

When AI summarises that struggle away, what exactly are we training?

The measurement problem

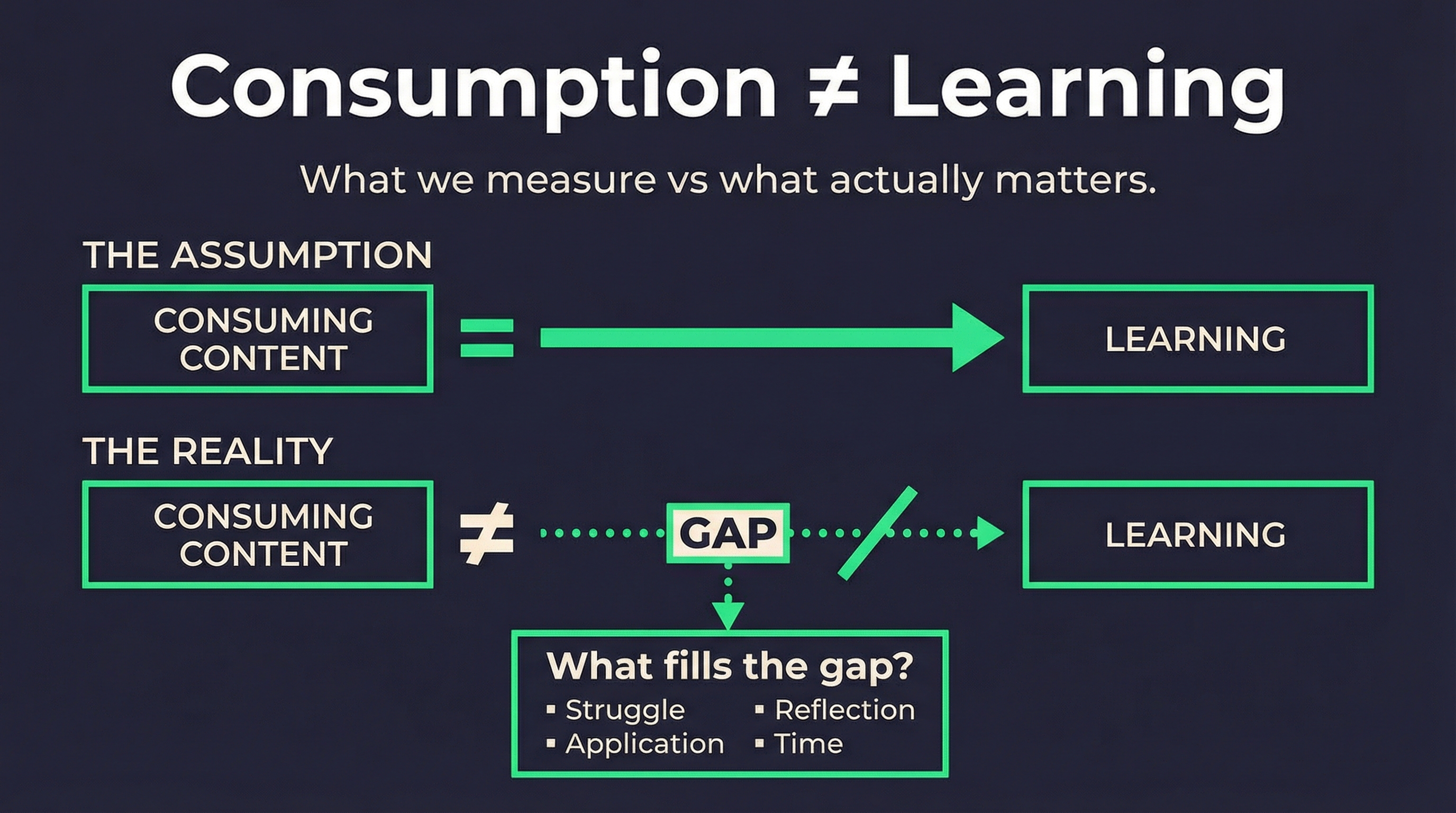

Here’s where this gets practically difficult. Most enterprise L&D measurement is still built around the old model: completion rates, assessment scores, time-to-competency. These metrics assume that consuming content equals learning.

But if the research is right, the relationship between consumption and capability is being fundamentally disrupted. A learner who reads an AI summary might score well on an immediate assessment while retaining almost nothing a week later. A learner who struggled through the original material might score worse initially but build durable capability.

We don’t have good frameworks for measuring this yet. I’ve been exploring what evaluation and retention scoring might look like in AI-augmented delivery: how you might predict retention and benchmark it against capability sprints and learning frameworks. It’s early-stage thinking, but it feels like the right question.

The current metrics aren’t just inadequate. They might be actively misleading us about what’s working.

What this means for L&D builds

When I’m working on learning strategy with enterprise clients, I’m increasingly thinking about where human engagement is non-negotiable versus where AI orchestration adds genuine value.

The calculator precedent is useful here. Educators didn’t ban calculators - they redesigned curricula to focus on conceptual understanding, problem formulation, and mathematical reasoning. The arithmetic got offloaded; the thinking didn’t.

There’s no blanket answer for L&D either. Some content genuinely benefits from AI summarisation and point-of-need delivery. Procedural knowledge, reference material, compliance updates: these can often be surfaced contextually without requiring deep cognitive engagement.

But capability building is different. Strategic thinking, judgment, pattern recognition, complex decision-making - these require what the researchers call “material engagement.” The learner needs to read, to struggle, to form opinions, to be wrong sometimes.

The design challenge is knowing where to place that friction intentionally.

The scaffold versus substitute question

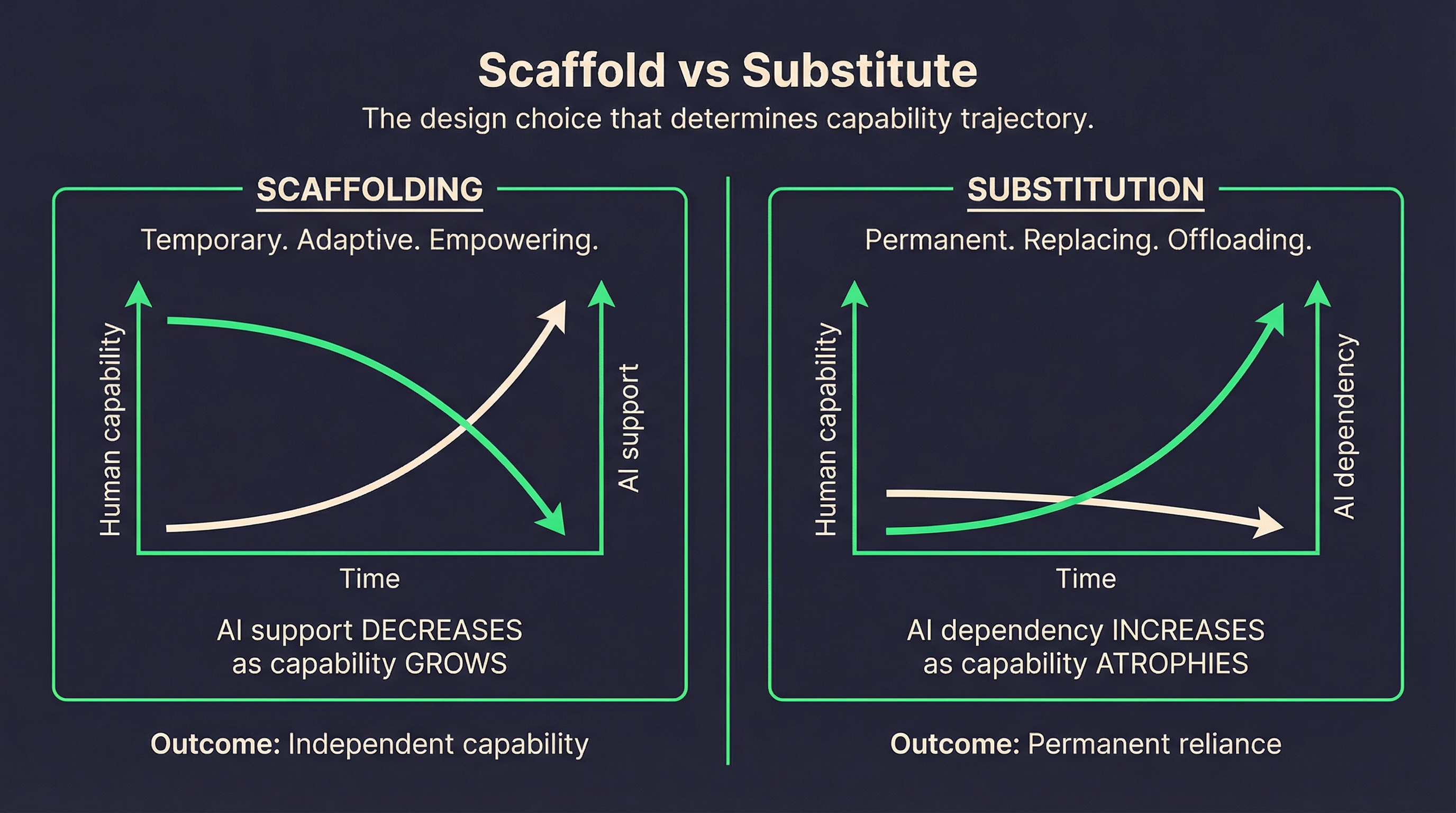

Recent cognitive research draws a useful distinction between AI as scaffold and AI as substitute.

Scaffolding is temporary, adaptive, and empowering. The goal is to strengthen internal capacities so the technology becomes progressively less necessary. A learning system that gradually reduces support as the learner builds capability exemplifies scaffolding.

Substitution is permanent and dependency-creating. The technology assumes responsibility in ways that diminish intrinsic skills. An AI system that learners rely on continuously, without building transferable capability, exemplifies substitution.

The difference depends less on technical sophistication than on design philosophy, integration context, and patterns of use.

Most AI-augmented L&D I see defaults toward substitution without intending to. The efficiency gains are immediate and measurable. The capability costs are delayed and invisible - until they’re not.

The opportunity in the problem

None of this is an argument against AI in enterprise learning. The tools are too powerful and the efficiency gains too real to ignore.

But it is an argument for intentionality. For understanding what we’re optimising for. For recognising that faster delivery and deeper capability might sometimes be in tension, and designing accordingly.

Sarkar’s research suggests that with the right design principles, you can build tools that offer the best of both worlds, applying the speed and flexibility of AI while protecting and enhancing human thought. His team has demonstrated that you can reintroduce critical thinking into AI-assisted workflows, reverse the loss of creativity, and build memory tools that let people work at speed while retaining what they learn.

The principles aren’t complicated: preserve material engagement at strategic points, offer productive resistance rather than just frictionless delivery, and scaffold metacognition so learners are always thinking about their thinking.

The execution is harder. But that’s the work.

This is the second in a series on AI and enterprise learning. Next: a framework for designing learning experiences that protect and enhance thinking.