The short version

If you’ve used AI image generation - Midjourney, DALL-E, Stable Diffusion - you’ve been using diffusion models. They work by starting with random noise and gradually refining it into an image based on your text prompt. It’s an elegant process, and it’s produced remarkable results.

Stable cascading does something different. Instead of building a single image from noise in one pass, it works in multiple stages. Each stage operates at a different resolution, progressively adding detail. Think of it as building a sketch first, then refining it into a painting, then adding fine detail - rather than trying to paint the final piece in one go.

The result? Better compositional accuracy, improved detail control, and - interestingly - lower compute costs for high-quality output.

If you’re commissioning AI-generated imagery for your business, this matters. Here’s why.

How standard diffusion works

Let me give you the non-technical version of standard diffusion, because understanding the baseline makes the comparison clearer.

A diffusion model starts with pure noise - think static on an old television. Over a series of steps, typically 20-50, the model progressively removes noise and shapes the image according to your prompt. Each step refines the image slightly. After all the steps are complete, you get your final output.

The entire process happens at the target resolution. If you want a 1024x1024 image, the model works in that resolution space from start to finish. This means every denoising step is computationally expensive because it’s processing the full image at full resolution.

Standard diffusion models are good. Really good. But they have some known limitations:

- Compositional accuracy - complex scenes with multiple subjects, specific spatial relationships, or detailed text can be hit-and-miss

- Compute intensity - higher resolutions require significantly more processing power

- Detail consistency - fine details like hands, text, and complex patterns can degrade

- Prompt adherence - the more specific your prompt, the more likely the model is to miss or reinterpret elements

How stable cascading works differently

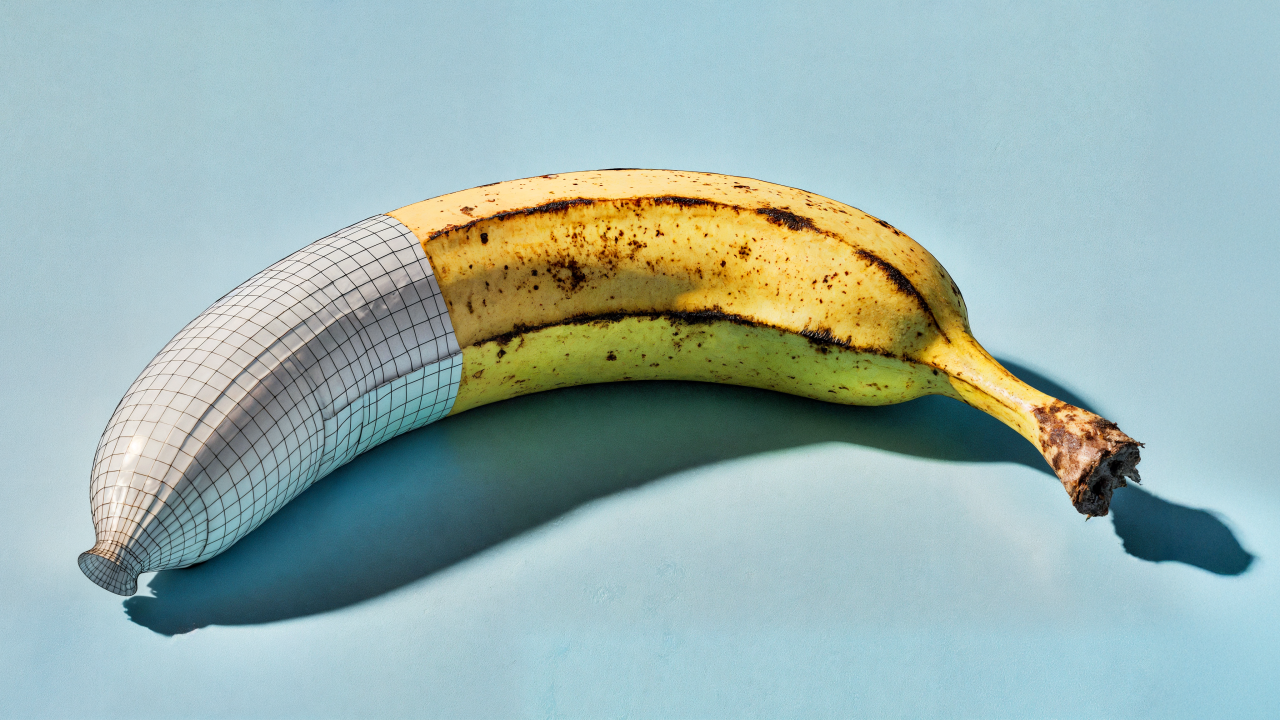

Stable cascading breaks the generation process into distinct stages, each operating at a different resolution.

Stage 1: Latent generation. The model creates a highly compressed representation of the image in a very small latent space. This is where the core composition, structure, and semantic content get established. Think of it as the pencil sketch - rough, small, but capturing the essential structure. Because this operates at very low resolution, it’s computationally cheap.

Stage 2: Latent decoding. The compressed representation gets expanded into a higher-resolution latent image. This stage adds detail, refines spatial relationships, and improves accuracy. The sketch becomes a detailed drawing.

Stage 3: Final decoding. The latent image gets decoded into the final pixel-space output at full resolution. Detail gets sharpened, textures get refined, and the final image emerges.

The key insight is that the heavy creative lifting - composition, subject placement, colour relationships - happens in the cheap, low-resolution early stages. The expensive high-resolution processing only handles detail refinement, where the creative decisions have already been made.

Why this matters for production

If you’re using AI imagery in a business context - for marketing, product visualisation, content production - the practical differences are significant.

Better composition

Because stable cascading separates structural decisions from detail decisions, it tends to produce more compositionally accurate images. If you ask for “a red coffee cup on a wooden table with a window in the background,” a cascading model is more likely to get the spatial relationships right first time.

For production work where you need specific compositions - product shots, scene setups, layout concepts - that’s a meaningful improvement.

Improved text rendering

One of the persistent weaknesses of standard diffusion has been text in images. Signs, labels, packaging - anything with readable text tends to come out garbled. Stable cascading handles this better, though it’s still not perfect. The multi-stage process gives the model more opportunities to refine letterforms.

Lower compute costs at quality

This is the compression economics angle. Because the computationally expensive decisions happen at low resolution, you can generate higher-quality images for less compute cost than equivalent standard diffusion output. If you’re generating at volume - and most production use cases involve volume - that cost difference matters.

More controllable outputs

The staged process means there are more intervention points. You can influence the output at different stages of generation, giving you finer control over the final result. For production work where you need consistency across a series of images, that control is valuable.

What this means if you’re commissioning imagery

If you’re working with a studio or agency that uses AI imagery, or if you’re evaluating tools for your own content production, here’s what I’d take away from this.

Quality is improving because of architecture, not just scale. The conversation about AI image quality has been dominated by model size - bigger models, more training data. Stable cascading shows that smarter architecture can deliver quality gains without just throwing more compute at the problem. That’s good news for efficiency and accessibility.

Ask about the model, not just the output. When evaluating AI imagery providers, ask what generation approach they’re using. Different architectures have different strengths. Cascading models are better for compositional accuracy and detail. Standard diffusion models have broader tool support and more community resources. The right choice depends on your specific use case.

Cost per image is coming down. The efficiency gains from cascading architectures mean the cost of generating high-quality AI imagery is dropping. For businesses that need volume - product variants, social content, localised marketing - this makes AI-generated imagery increasingly viable as a production tool, not just a novelty.

Don’t get locked into one approach. The image generation space is moving fast. Cascading is one architectural innovation among many. Mixture-of-experts, consistency models, and flow-matching approaches are all developing in parallel. Stay flexible, test regularly, and don’t commit your entire production pipeline to a single tool or approach.

My take

I’ve been testing stable cascading models since they became available, and the improvement in compositional accuracy is genuinely noticeable. It’s not a revolution - the images are still recognisably AI-generated in many cases. But the reliability is better. The consistency is better. And the cost efficiency opens up use cases that weren’t practical before.

For most businesses, the practical takeaway is simple: AI image generation is getting better, faster, and cheaper - and not just because the models are bigger. The underlying technology is getting smarter. That’s a trend worth paying attention to.