Your feed isn’t lying to you

You’ve probably seen your LinkedIn feed flooded with perfect character images and videos, great UGC examples, and seamless ‘image edits’ this week. Everything is a “Photoshop killer”, right? It’s like being back in the early noughties when templates and tutorials came out. Step-by-step guides made everyone look amazing (at one thing).

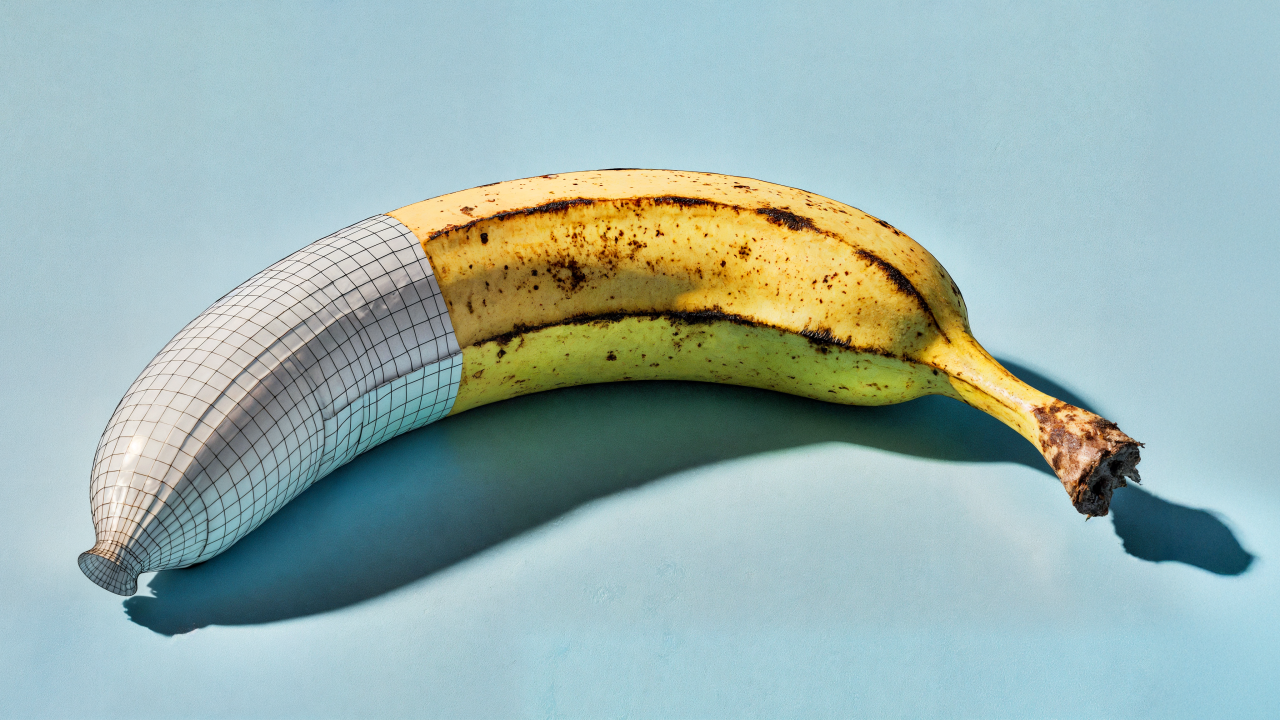

But why this type of content, and why is it so easy with Nano-Banana? What’s the secret sauce behind this spicy new model?

Google’s finally admitted they’re behind “Nano-Banana”, the mysterious AI model that’s been making waves across social media. So it’s about time we talked about why this little yellow beast is causing such a stir in our industry.

What’s got everyone going bananas?

The posts flooding your feed aren’t just lucky accidents. Nano-Banana’s built on the same visual autoregressive architecture that I discussed in my piece about GPT-4o’s viral image sequences. But Google’s Gemini 2.5 Flash MMDiT has taken it several steps further.

Autoregressive models work fundamentally differently from simple diffusion transformer models - they predict an image piece by piece, as a sequence of visual tokens, just like how language models predict words. Because each new piece is conditioned on everything that came before it, these models naturally maintain consistency between images, which is EXACTLY why we’re seeing such incredible character consistency with Nano-Banana.
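To make “sequential” concrete, here’s a toy sketch in Python. It is not Nano-Banana’s actual code: `next_token`, `generate`, `VOCAB_SIZE`, and the `character` tokens are all hypothetical names I’ve made up for illustration, and a real model would replace the simple averaging rule with a trained network over visual tokens. The only thing it shows is the loop itself, where every new token is chosen from the full context and then fed back in.

```python
import random

VOCAB_SIZE = 16  # hypothetical: number of distinct visual tokens in this toy


def next_token(context, rng):
    """Toy stand-in for a trained model: pick the next token from the context."""
    # Anchor on the average of everything generated so far, so earlier
    # choices keep influencing later ones. Assumes a non-empty context.
    anchor = sum(context) // len(context)
    step = rng.choice([-1, 0, 1])  # small random variation around the anchor
    return max(0, min(VOCAB_SIZE - 1, anchor + step))


def generate(prefix, length, seed=0):
    """Extend `prefix` one token at a time, feeding each result back in."""
    rng = random.Random(seed)
    tokens = list(prefix)
    for _ in range(length):
        tokens.append(next_token(tokens, rng))
    return tokens


character = [8, 8, 8]  # hypothetical tokens standing in for a reference character
print(generate(character, 6))
```

Because each step looks back at the whole sequence, the generated tokens stay close to the reference prefix rather than starting from scratch each time, which is the intuition behind the consistency described above.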

While everyone else is still wrestling with controlling typical diffusion models (that treat each image like a blank canvas), Nano-Banana’s autoregressive architecture delivers character consistency that’s hitting over 90% accuracy across the LMArena and Artificial Analysis qualitative benchmarks. That’s amazing when you think about how much time and money folks are spending on diffusion options, trying to achieve that level of consistency.

So are “diffusion only” models dead?

Well no, but the days of pure diffusion transformer models are coming to an end. We’re using a hybrid of model weights to balance quality control, speed, and scalable inference costs.

Don’t get me wrong. These innovation leaps are not time wasted - adopting new models for inference is relatively easy (which is why you’re seeing almost all platform-based services growing together). It’s just another symptom of the rapid changes we’re seeing in content production and generation, which NEED to be built on adaptable foundations.

Why agencies should pay attention

This isn’t just another shiny AI toy. The autoregressive architecture shift represents a fundamental change in how we can approach visual asset creation - and importantly, control.

Remember when brands like Coca-Cola struggled with character consistency in their early GenAI campaigns? Those days of expensive workarounds, IP-Adapters, and ControlNets might be behind us. When you can maintain brand character integrity across multiple parameters and variations with simple text prompts or directional control, the entire economics of campaign creation changes.

We are banking on a ‘hybrid approach’, which means we’re getting the best of both worlds: the consistency and speed of autoregressive models with the quality control of diffusion systems. For marketing teams constantly battling tight deadlines and tighter budgets, this is truly game-changing.

The democratisation continues

What’s fascinating is how this follows the exact pattern we saw with GPT-4o’s image sequences. The viral posts aren’t just showing off - they’re stress-testing capabilities that’ll soon become standard workflow tools.

Think about it: if nano-creators and micro-influencers can now generate professional-quality, character-consistent content in seconds, the barrier to entry for high-quality visual content just got demolished. And the expectations across the landscape need to adapt to accommodate this.

In traditional marketing terms, this is like watching the “share of voice” landscape get completely reshuffled. When the lowest common denominator suddenly has access to enterprise-level visual consistency, the baseline expectation for content quality isn’t just shifting - it’s being dragged around by creativity, trends, and technology.

It’s the classic challenge marketers face when new technology enters the market: if you don’t understand how consumer behaviour is changing, you become vulnerable to nimble competitors who do. Except now, those competitors aren’t just other brands - they’re content creators, platforms, individuals, and startups.

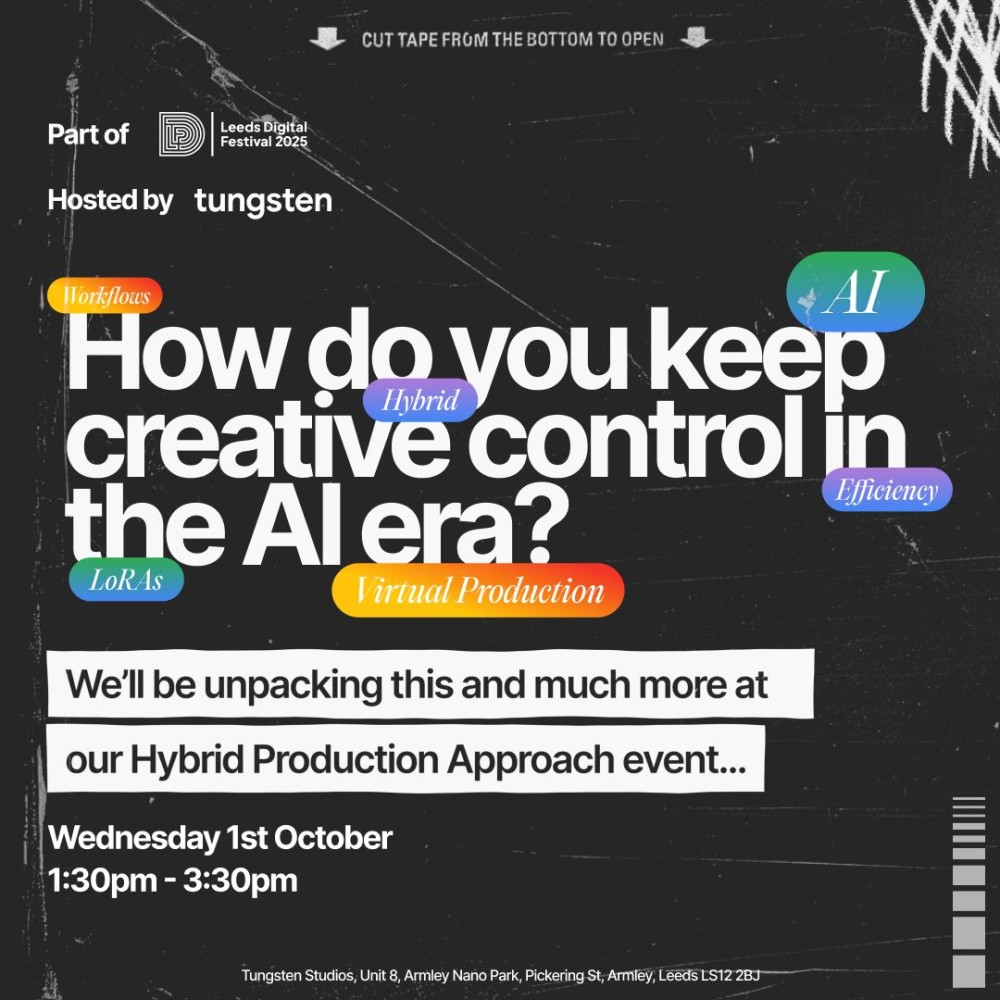

The bigger content picture - hybrid

I stopped thinking about content as “images, videos, CGIs, AIs” years ago. That’s an important distinction in today’s agency landscape.

Under the hood of efficient and intelligent production, they are ALL interchangeable. Autoregressive models aren’t just transforming static images - they’re changing the landscape of content generation altogether. When image consistency improves, we can carry that improvement into video, CGI, UGC, social, and VFX.

Setting realistic expectations

Before we all get completely swept up in bananarama fever, let’s be honest about what this means practically.

Yes, the technology’s impressive. Yes, it’s a significant leap forward.

But the real value isn’t in the flashy demos or one-off posts about “the death of XXXX” or “blah blah killers” - it’s in how these new approaches solve specific workflow problems we’ve been wrestling with for years. Connecting creatives with better, more stable and scalable options for creating great stuff. That’s it.

The FT reported that targeted AI implementations see 30-40% efficiency gains. Set against the MIT report that said 95% of AI projects fail, that gap deserves a closer look, which is the point of these articles:

- Treat R&D (especially AI R&D) with the rigour and attention that it deserves

- Build AI foundations that you EXPECT to evolve

- Solve for the future of the commerciality of your business and your clients’ business

For marketeers, agencies, and production companies, this is fundamentally changing how we plan, price, and scope visual projects in the future.

Nano-Banana’s viral moment is really about proving that autoregressive models can deliver on the promise of consistent, controllable image generation - for individuals, not just AI nerds like us. We’re watching the technology mature from experimental to practical in months.

Don’t hem yourselves in, but don’t miss out either. The posts flooding your feed aren’t just social media noise - they’re folks demonstrating capabilities that’ll be standard pipelines within months, not years.