Here we go again

Google announced their Gemini 3 yesterday which means Nano Banana 2 can’t be far away. And everyone’s gonna be banging on about better pixels, higher resolutions, and greater definition on Will Smith’s forehead - but honestly, that’s not gonna be my headline here.

The interesting bit is reasoning capabilities baked into the image generation.

Gemini 3 launched with enhanced reasoning to support code generation, visual coding applications, and native computer vision. And while these are shaking up the entire AR experiential space - there’s another goldmine on the horizon.

What reasoning actually changes

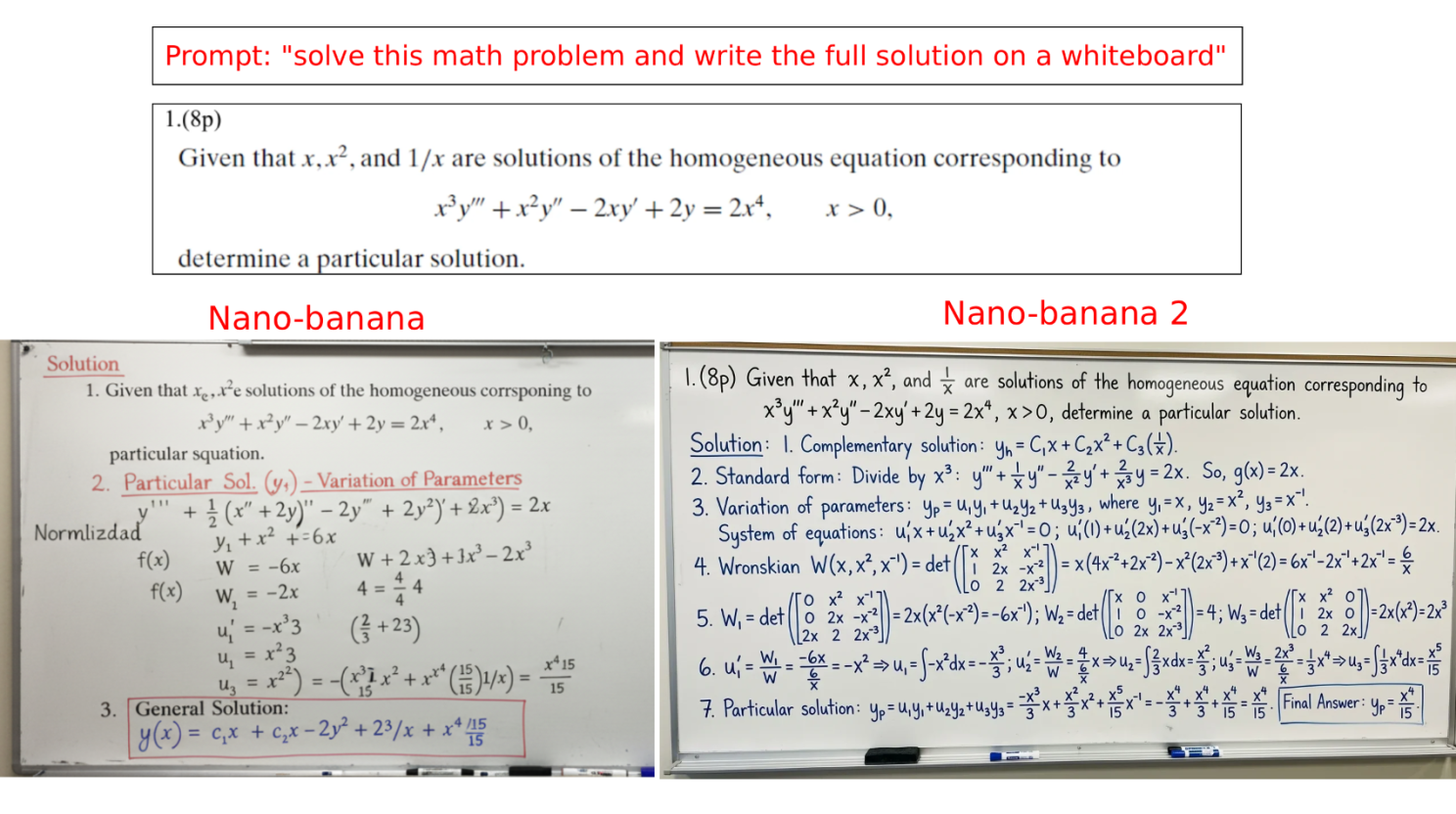

Look, it’s not just about slapping accurate text and maths equations into imagery (though that’s nice). When you bolt a reasoning model into an image model, you’re changing how the generation understands and interprets what you’re asking it to create. Now - let’s unpack that from an operational standpoint:

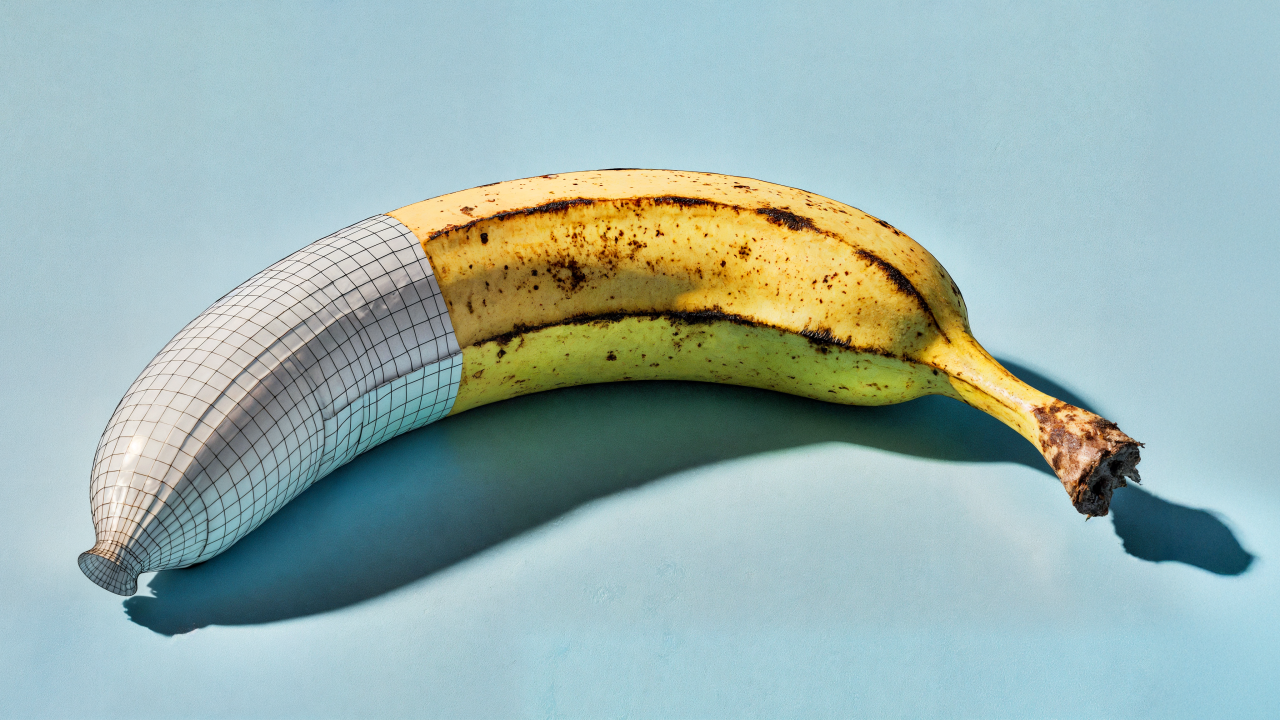

Physics and materials that actually make sense. Reflections, refractions, lighting that behaves properly (or artistically doesn’t!). Early reports show Nano Banana 2 handling complex masking, materials, specular, shadows, and lighting “perfectly”.

Context awareness at scale. When this sits within a million token context window, you’ve got a model that’s maintaining consistency across entire projects, not just single (individual) prompts.

Multi-image reasoning. The model can understand and combine multiple input visuals by generating corrective workflows, understanding relationships between visual elements in ways diffusion models just can’t.

Are we back to the transformer architecture again?

Nano Banana’s built on the same visual architecture I wrote about with GPT-4o. Instead of starting with noise and removing it (like Midjourney or Stable Diffusion), it predicts pixels in stages (planning, review, and self-correcting internally) before final generation, which is more like how it builds text now. This isn’t just technical geekery.

It means the model is using a visual language to assess the context around the generation, and naturally maintaining consistency between generations. Character consistency hitting over 95% accuracy without wrestling with variable control nets or repeated reference context! This is gold dust.

What this unlocks for the creatives

As with all of these releases - the ‘quality’ isn’t just sat in the subjective benchmarks, it’s in what this new feature natively unlocks for the creatives. Let’s have a look:

3D modelling pipelines

Consistent multi-angle views will reduce 3D geometry modelling by up to 40% because you’re working from stable reference points rather than inconsistent concept art. We can factor in consistent modelling construction to reduce any geometry optimisation or retopology after generation.

Product photography datasets

Generating consistent branded product shots across dozens of scenarios without reshooting or extensive retouching. We’re here NOW - but we’re applying lots of (production) context at the moment. Lighting dynamics, material specifications, IOR data.

Real-time and experiential environments

Treating images, drawings, 3D geometry, audio, and video as interchangeable functions. This is something I talk about a lot - but it’s REALLY important! By simplifying the essence of the content to the action, we can represent the generated asset as ANY format (image, video, sonic, 3D, etc.) - this is really cool right?

The LLM orchestra

Google’s now live with Gemini 3, and early testing with Nano Banana 2 shows some real polish from the ‘reasoning-enhanced image generation’ function. But this isn’t about picking one provider and going all-in.

The real ‘operational’ opportunity sits in LLM orchestration - building ‘multi-stage’ workflows where different models handle what they’re best at. No single LLM is optimal for all actions, creations, or tasks - just the same as no creative is proficiently multi-disciplined.

For content production, this means routing tasks through controlled and specialised models rather than forcing everything through one platform. Better performance, more control, and workflows that actually match how experienced production teams work.

If you’d like to see how this orchestrated approach works in content categorisation, classification, and generation - on a local and scalable setting - I’d love to show you.