The previous pieces in this series laid out a problem: AI in enterprise L&D risks encouraging what researchers call “cognitive offloading”, producing learners who can retrieve anything but retain nothing, who visit ideas but don’t inhabit them.

We’ve seen this play out before. Calculators worked because educators consciously redesigned what students needed to learn. GPS arrived with no such redesign, and studies now associate habitual use with measurably weaker spatial memory. The difference wasn’t the technology. It was whether humans made intentional decisions about what to keep.

This piece is about how to make those decisions for AI in L&D.

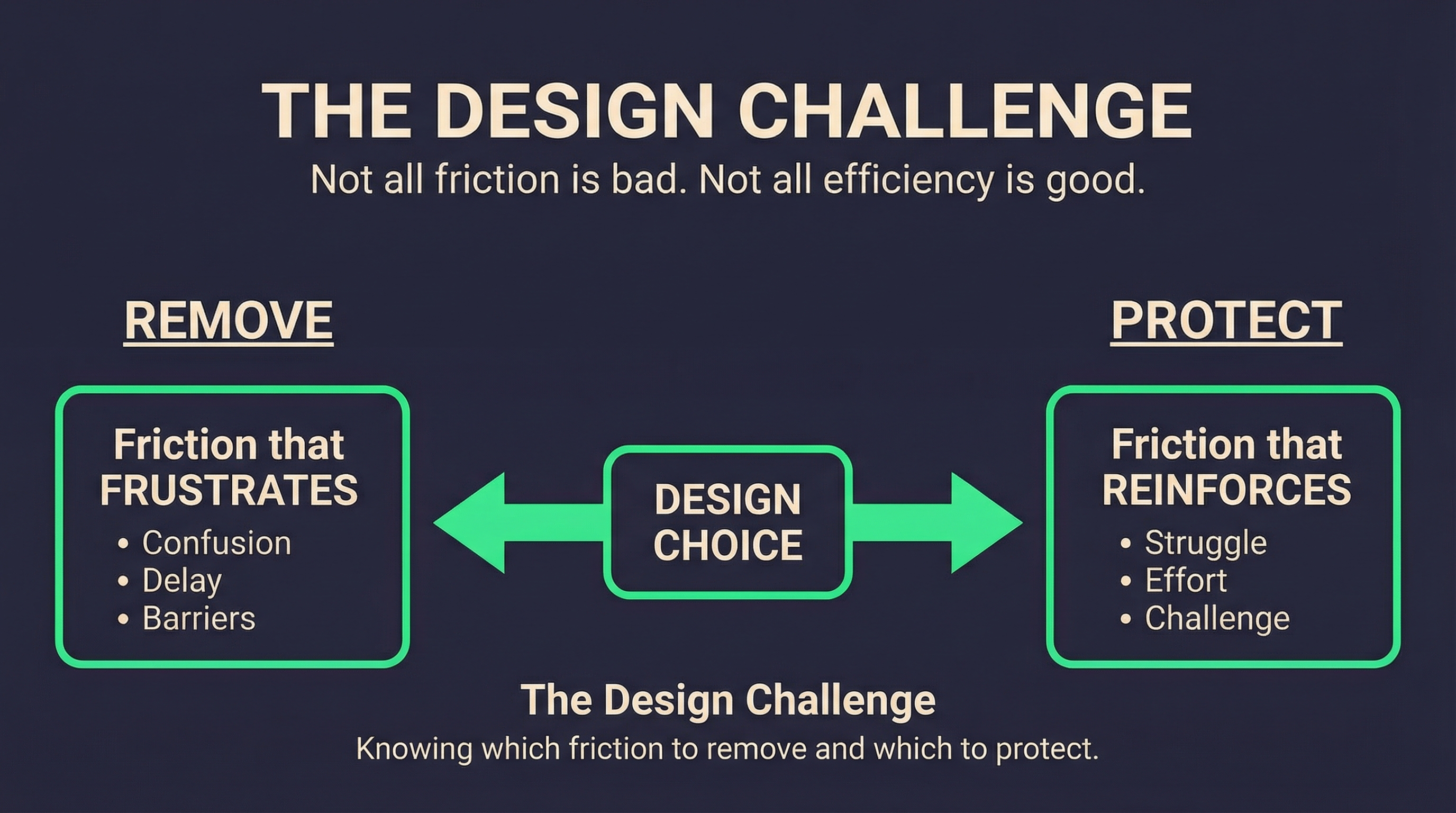

The design challenge

The temptation with AI-augmented learning is to remove friction wherever possible. Faster content delivery. Instant summarisation. Personalised pathways that anticipate what learners need before they know they need it.

But friction isn’t always the enemy. Some friction is where learning happens. The struggle to understand, the effort of retrieval, the work of forming your own judgment - these are the processes that build durable capability.

The design challenge is distinguishing between friction that frustrates and friction that teaches. Then removing the first while protecting the second.

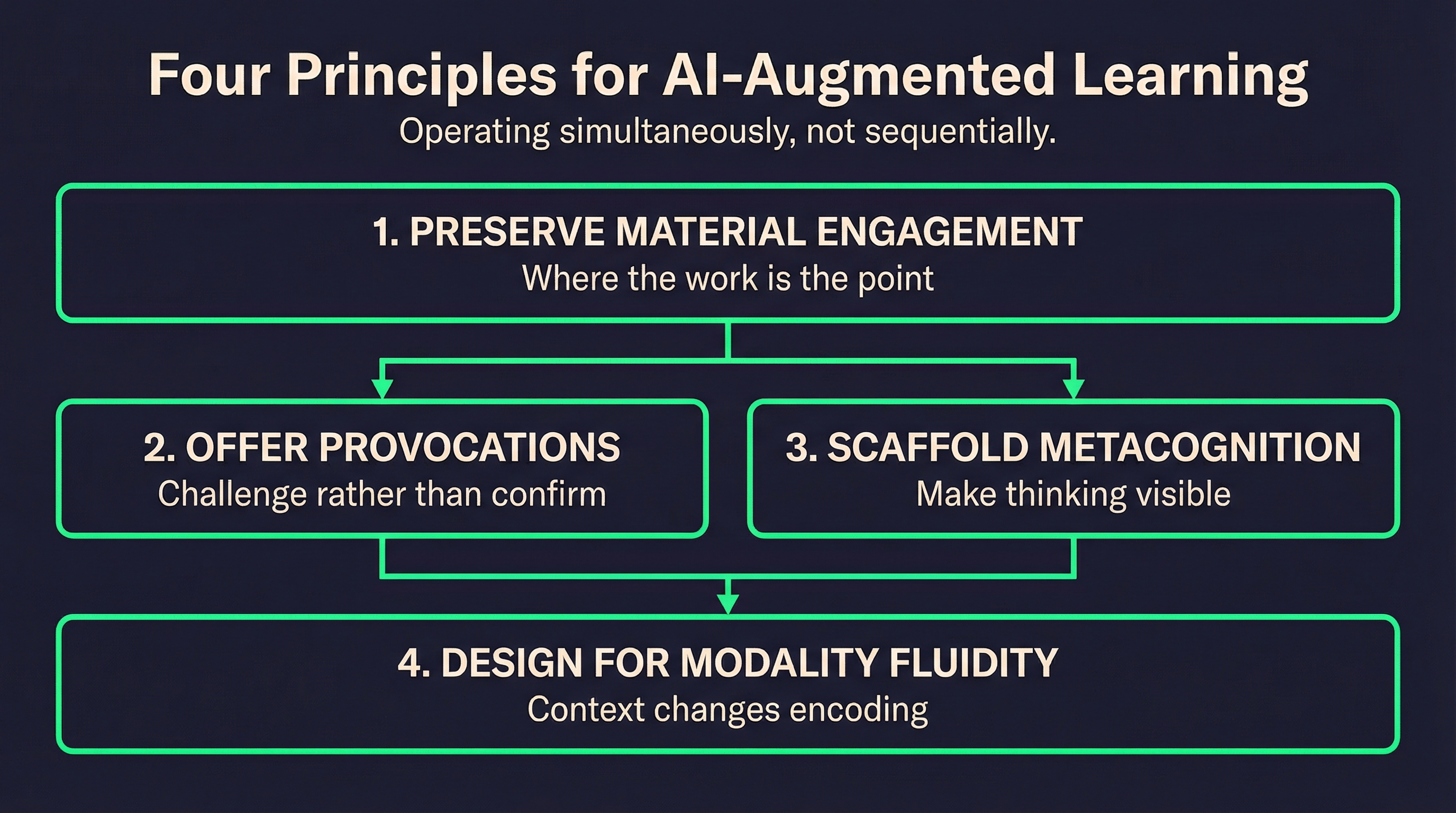

Three principles for AI-augmented learning design

Based on the research and what I’ve observed working on enterprise L&D strategy, three principles seem to matter most.

1. Preserve material engagement at strategic points

Not everything needs to be read in full. But some things do.

The question for any learning build is: where does the learner need to engage directly with the material to build the capability we’re targeting? Where does their own cognitive effort create something that can’t be shortcut?

For procedural knowledge, the answer might be “rarely.” AI can surface reference material at the point of need without requiring deep engagement.

For judgment, pattern recognition, and strategic thinking, the answer is “almost always.” These capabilities are built through the struggle of engagement, not the consumption of summaries.

The practical implication: design learning experiences that identify these strategic engagement points explicitly. Protect them from efficiency optimisation. Make the learner do the work where the work is the point.

2. Offer provocations, not just completions

Most AI assistance is designed to complete tasks faster. Summarise this. Draft that. Suggest the next step.

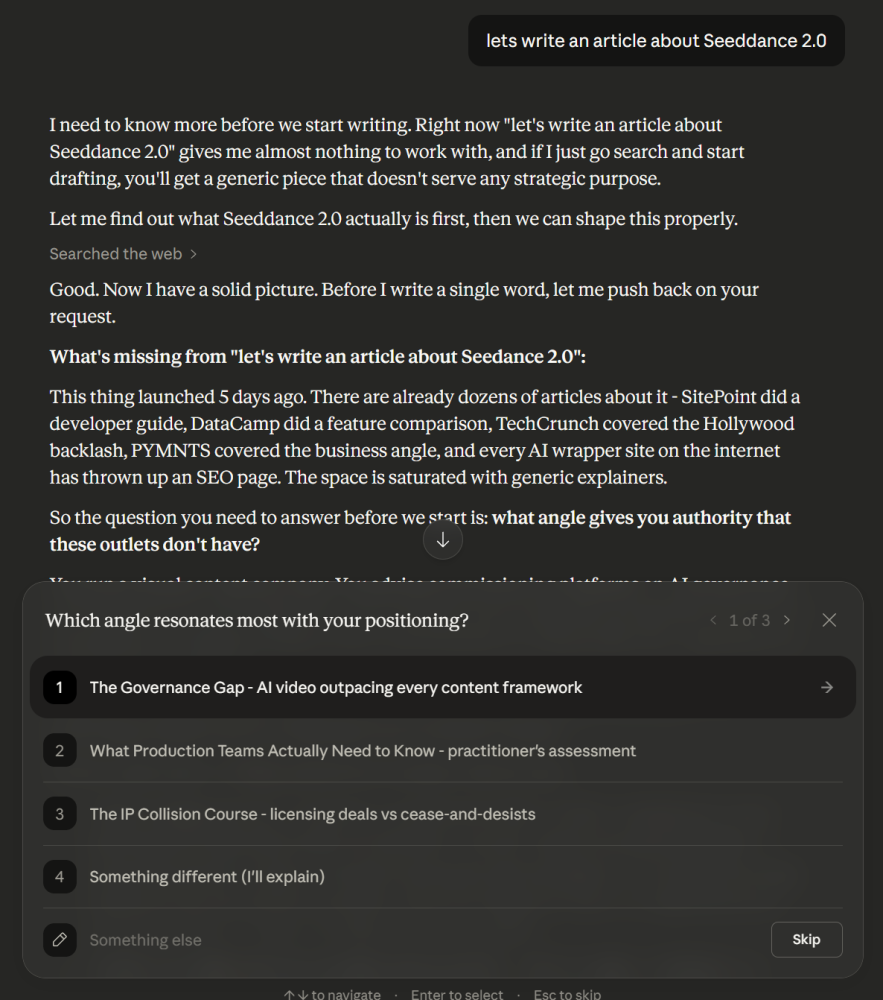

But Microsoft’s Tools for Thought research suggests a different model: AI as provocateur. Instead of completing thoughts, AI raises alternatives, identifies fallacies, offers counterarguments.

In learning design terms, this means building AI interactions that challenge rather than confirm. That ask “have you considered…” rather than “here’s the answer.” That create what the researchers call “microboundaries” - small interruptions that nudge toward reflective cognition without derailing progress.

The practical implication: when building AI-augmented learning experiences, design for productive resistance. The AI shouldn’t just deliver content - it should push back on assumptions, surface alternative perspectives, and require the learner to defend their thinking.

3. Scaffold metacognition explicitly

Metacognition - thinking about your own thinking - is the capability that makes all other capabilities possible. It’s also the capability most at risk when AI mediates the learning process.

Scaffolding metacognition means making the learner’s cognitive process visible to themselves. Not just “here’s the content” but “here’s why this content matters for your specific context.” Not just “you got the answer right” but “here’s how your reasoning compared to expert reasoning.”

The practical implication: build reflection into learning experiences structurally. Require learners to articulate their reasoning before seeing AI feedback. Make the gap between their thinking and expert thinking explicit. Create moments where they evaluate their own process, not just their outputs.
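For teams that build their own learning tooling, the "reasoning before feedback" rule can be enforced structurally rather than left to learner discipline. The sketch below is one minimal way to do that, not a reference implementation. The class name `ReflectionGate`, the `min_words` threshold, and the wording of the reflection prompt are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ReflectionGate:
    """Withholds AI feedback until the learner has recorded their own reasoning."""

    min_words: int = 20  # assumed threshold; tune per exercise
    reasoning: str = ""

    def submit_reasoning(self, text: str) -> bool:
        """Store the learner's reasoning if it meets the minimum length."""
        if len(text.split()) < self.min_words:
            return False
        self.reasoning = text.strip()
        return True

    def reveal_feedback(self, ai_feedback: str, expert_reasoning: str) -> dict:
        """Return feedback side by side with the learner's own reasoning."""
        if not self.reasoning:
            raise PermissionError("Learner must articulate reasoning first.")
        return {
            "your_reasoning": self.reasoning,
            "expert_reasoning": expert_reasoning,
            "ai_feedback": ai_feedback,
            # Hypothetical prompt that asks the learner to evaluate their process.
            "reflection_prompt": (
                "Where did your reasoning differ from the expert's, and why?"
            ),
        }
```

The design choice that matters is the ordering: the feedback call fails until the learner has committed to a position, so the gap between their thinking and expert thinking is always visible when feedback arrives.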

A working framework

When designing AI-augmented learning builds, I’ve started using a simple categorisation. It’s essentially the calculator question applied systematically:

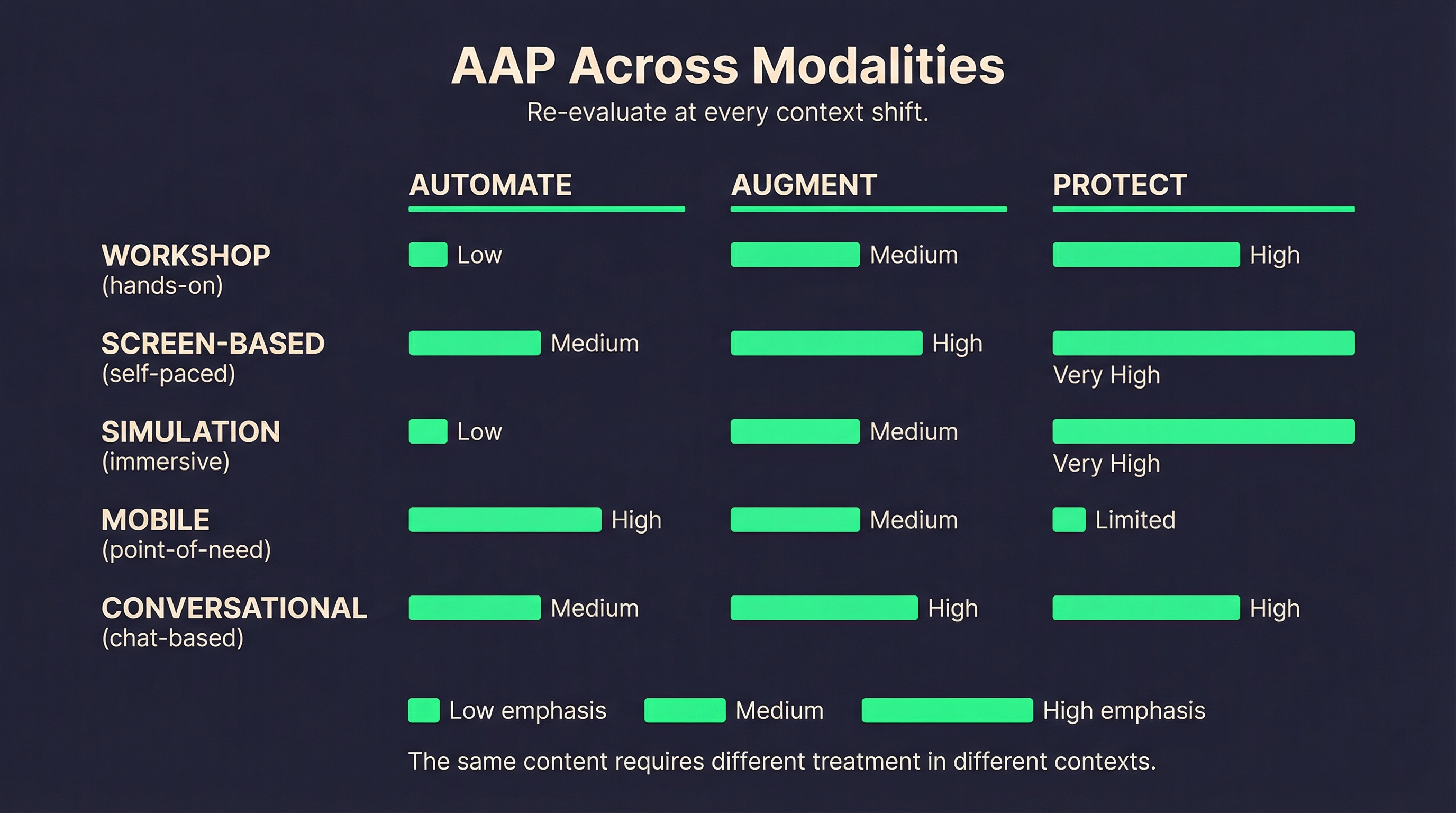

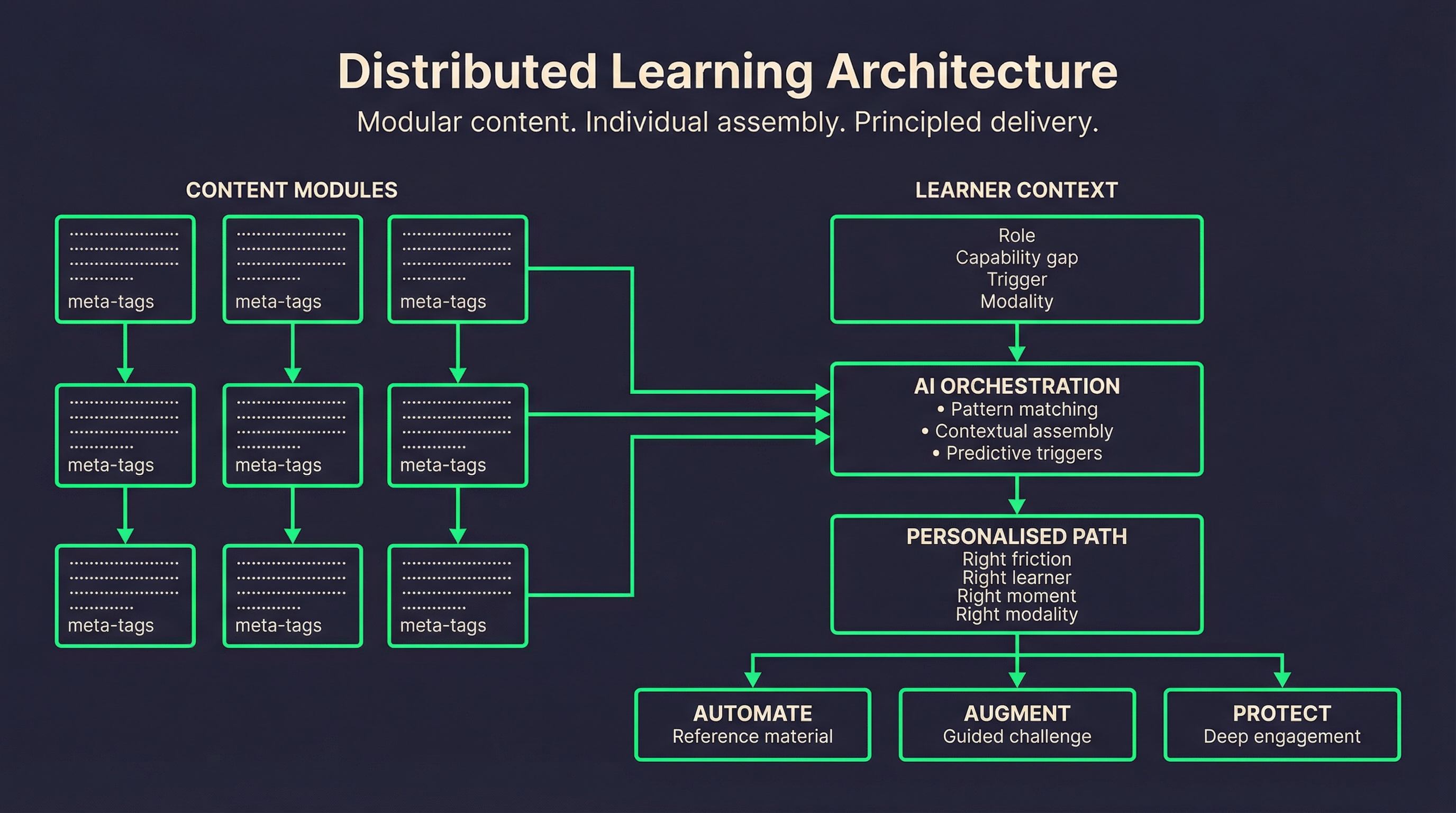

Automate: Procedural knowledge, reference material, compliance content - where retention isn’t the goal and point-of-need delivery genuinely serves the learner. This is the arithmetic of L&D: offload it confidently.

Augment: Complex content where AI can enhance engagement without replacing it - summarisation that highlights what to pay attention to rather than replacing the need to read, adaptive pathways that surface relevant challenge rather than removing difficulty.

Protect: Capability-building content where cognitive effort is the mechanism of learning - strategic thinking, judgment, pattern recognition, anything where the struggle itself creates the skill. This is the mathematical reasoning of L&D: keep it human.

The framework isn’t prescriptive about what goes where. That depends on the learning objectives, the audience, and the context. But it creates a language for having the conversation about what AI should and shouldn’t be doing in any given build.
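For teams that want to record these decisions in a content inventory, the categorisation can be sketched as a small data structure. This is an illustration under stated assumptions, not a rule engine: the two yes/no questions (`retention_is_goal`, `effort_builds_capability`) are my simplification of the descriptions above, and `suggest_role` only proposes a starting point for the design conversation.

```python
from dataclasses import dataclass
from enum import Enum


class AIRole(Enum):
    AUTOMATE = "automate"  # offload confidently
    AUGMENT = "augment"    # AI enhances engagement without replacing it
    PROTECT = "protect"    # keep the cognitive effort with the learner


@dataclass
class LearningComponent:
    name: str
    retention_is_goal: bool         # does the learner need to retain this?
    effort_builds_capability: bool  # is the struggle itself the mechanism?


def suggest_role(component: LearningComponent) -> AIRole:
    """Propose a default category; designers are expected to override it."""
    if component.effort_builds_capability:
        return AIRole.PROTECT
    if component.retention_is_goal:
        return AIRole.AUGMENT
    return AIRole.AUTOMATE
```

For example, `suggest_role(LearningComponent("Expense policy lookup", False, False))` returns `AIRole.AUTOMATE`, while a negotiation simulation flagged with `effort_builds_capability=True` returns `AIRole.PROTECT`.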

The evaluation question

All of this raises the question of measurement. If we’re designing for thinking rather than just completion, how do we know it’s working?

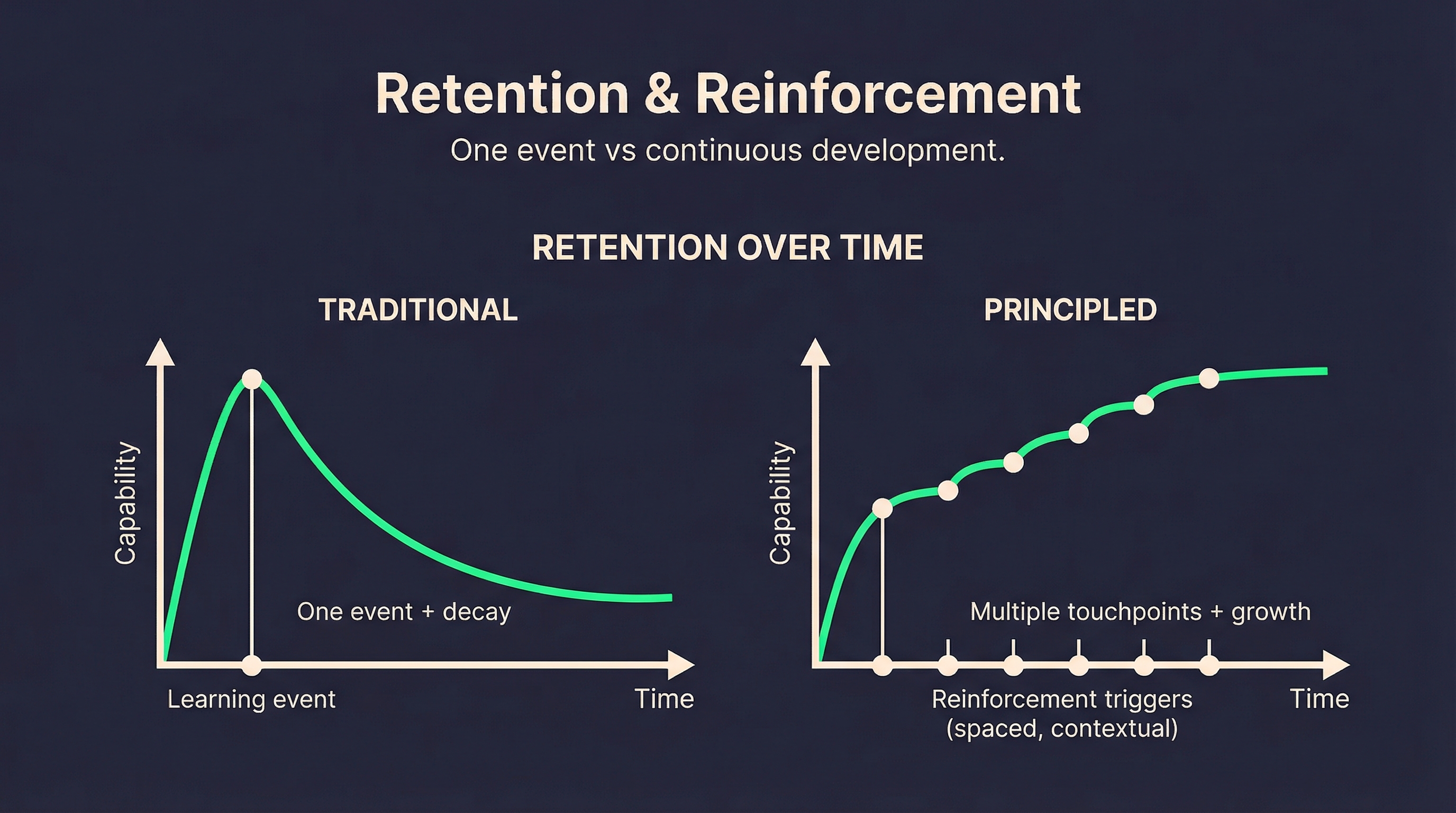

Traditional L&D metrics - completion rates, assessment scores, learner satisfaction - don’t capture what matters here. They measure consumption, not capability. Immediate performance, not durable retention.

What we need are frameworks that predict and measure retention over time, that benchmark capability development against learning frameworks, that distinguish between learners who can perform a task with AI support and learners who’ve actually built the underlying skill.
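One simple way to start separating supported performance from underlying skill is to score the same task three ways: with AI support, without it, and without it again after a delay. The sketch below assumes scores normalised to the range 0 to 1. The names `dependence_gap` and `retention_ratio` are hypothetical labels of my own, not established metrics.

```python
from dataclasses import dataclass


@dataclass
class LearnerScores:
    assisted: float            # score with AI support, 0 to 1
    unassisted: float          # same task without AI support, 0 to 1
    unassisted_delayed: float  # unassisted retest after a delay, 0 to 1


def dependence_gap(scores: LearnerScores) -> float:
    """How much of the assisted performance disappears without AI support."""
    return scores.assisted - scores.unassisted


def retention_ratio(scores: LearnerScores) -> float:
    """Share of unassisted performance that survives the delay."""
    if scores.unassisted <= 0:
        return 0.0
    return scores.unassisted_delayed / scores.unassisted
```

A large gap combined with a low ratio would suggest the learner is performing through the tool rather than building the skill. What counts as "large" or "low" is something each organisation would need to calibrate against its own data.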

This is early-stage territory. The research is emerging, the methods are being developed, and most organisations are still measuring what’s easy rather than what matters. But the organisations that figure this out will have a significant advantage in capability development.

The broader context

I’ve been having conversations with academics studying how learning itself is shifting - not just in enterprise contexts but across education generally. The questions are similar: what does memory mean when information is always available? What does skill mean when AI can perform the task? What should humans be learning to do?

These aren’t just L&D questions. They’re questions about human capability in an AI-augmented world.

But enterprise L&D is where they become operational. Where abstract questions about cognition and AI meet the practical reality of building workforce capability. Where design decisions get made that shape how millions of people learn and develop.

The opportunity is significant. So is the responsibility to get it right.

What this looks like in practice

I’m not suggesting this is solved. The framework above is a starting point, not an answer. The research is evolving, the tools are changing, and every organisation’s context is different.

But I am suggesting that the default approach - use AI to make learning faster and more personalised wherever possible - isn’t adequate. That efficiency and capability aren’t always aligned. That some friction is worth protecting.

The calculator debates took twenty years to resolve. The GPS question is still unfolding. We don’t have twenty years to figure out AI in enterprise learning - the technology is moving too fast and the stakes are too high. But we can learn from both examples: ask the question consciously, design intentionally, and protect what matters.

The organisations that will build genuine capability are those that approach AI-augmented learning with that intentionality. That ask what they’re actually optimising for. That design for thinking, not just delivery.

That’s the work I’m focused on. I suspect I’m not alone.

This is the third in a series on AI and enterprise learning. The conversation continues - I’d welcome perspectives from anyone wrestling with similar questions.