The shift you’ve already noticed

If you’ve been involved in content capture over the past three years, you’ll have noticed a shift in what’s being captured.

Gone are the days of the single linear shoot, the short social campaign, or a single packshot request. Even the most staunchly traditional production companies are realising this. The tier-one specialists are finally developing integrated social, CG, and hybrid content workflows too.

Content suites are the new normal. Idents, formats, versions, personalisation, BAU, social variants, and seasonally agnostic content generation.

So how do content creators keep this up? And what’s next?

The new mandate

Beyond the usual back and forth on budgets, shotlists, and schedules lies a new mandate.

Agencies and production studios are now collecting content as well-structured datasets. Why? To train custom branded AI models such as LoRAs (Low-Rank Adaptations): small add-on weights that sit on top of a generative model and narrow its focus to produce more accurate, more ‘on-brand’ AI generations. A well-trained LoRA expands on a brand’s unique visual DNA and extends the life and volume of content from the practical shoot.
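
For readers curious why a LoRA is so much lighter than retraining a whole model: the base weights stay frozen and only a low-rank update is learned, so a weight matrix W effectively becomes W + B·A, where B and A are two thin matrices. The sketch below simply counts trainable parameters for one layer. The 768 x 768 layer size and rank of 8 are illustrative assumptions, not figures from any particular production.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full, lora) trainable-parameter counts for one weight matrix.

    full: every entry of the d_out x d_in matrix is trained.
    lora: only B (d_out x rank) and A (rank x d_in) are trained.
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora


# Illustrative (assumed) layer size and rank.
full, lora = lora_param_counts(d_out=768, d_in=768, rank=8)
print(f"full fine-tune: {full:,} params; LoRA: {lora:,} params ({lora / full:.1%})")
```

With so few parameters to learn, the quality and consistency of the captured dataset carries a lot of the weight.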

Just as a VFX Supervisor is present on set, I often advocate for a Visual Dataset Supervisor in the busy shooting schedule - a kind of super-hybrid of DIT, VFX, Creative Strategist, and Compositor.

Having implemented this approach across dozens of projects, I’ve seen first-hand how it’s changing the face of production workflows, and creating far greater control in post and BAU content than we could ever have managed from a single shoot.

Why data capture matters now

The content landscape has shifted enormously over the past 10 years. According to McKinsey’s State of AI study, brands that incorporate custom-trained AI models see up to a 20% reduction in campaign supply chain costs and a 60% reduction in CPA (cost per acquisition) compared with traditional production methodologies.

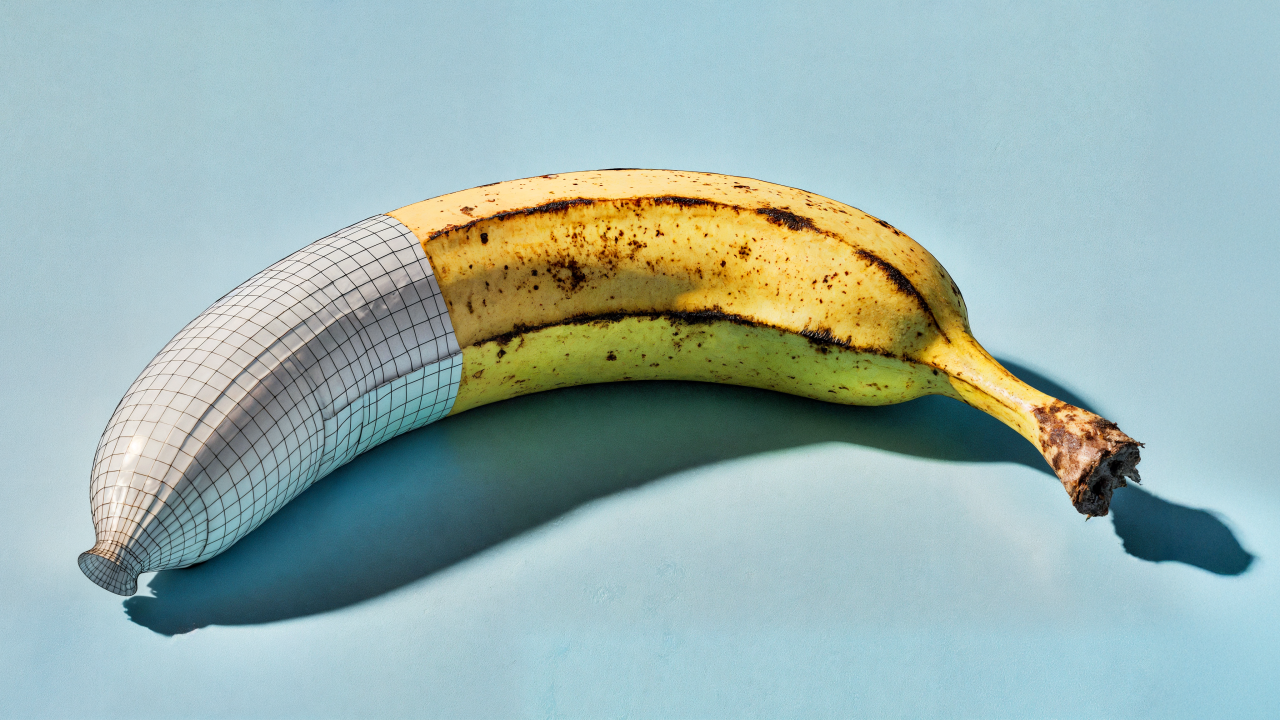

But there’s a catch - these datasets are not simple to create. Digital twins need to be high-quality, consistent, and built from professionally structured data for an AI to learn from.

For creative teams, this evolution presents some fascinating developments:

- Production shoots now serve dual purposes: creating final content AND real-world training data

- Brand consistency becomes programmatically enforceable across all outputs

- The creative process expands to include “teaching” your brand to an AI

As one Creative Director at a major agency recently told me, “We’re not just making content anymore; we’re building brand intelligence and telling human-led stories.”

Planning your data capture strategy

The success of your branded LoRA depends largely on how well you plan your data collection during production. This isn’t about randomly shooting more footage - it’s about deliberate, structured capture as part of the production schedule.

Working with a consumer retail brand, we integrated AI data collection into their NPD (new product development) campaign shoot. We allocated just 30 additional minutes with each product to capture controlled variation sequences. The resulting LoRA model now generates on-brand product imagery that’s indistinguishable from their standard production - saving them thousands per NPD on supplemental ecommerce and social content capture.

To implement this on your productions:

- Identify your brand’s key visual elements (lighting style, composition patterns, colour palettes)

- Create a shotlist specifically for AI training data that isolates these elements

- Allocate dedicated time in your production schedule for “data capture” sessions

- Ensure consistent metadata tagging during the shoot
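
As a rough illustration of the last point, consistent tagging can be as simple as recording the same fields for every frame and deriving the training caption from them, rather than typing captions by hand. This is a minimal sketch, not a standard: the `CaptureRecord` fields, the `brandx_style` trigger word, and the filenames are all hypothetical and should be replaced with whatever your shotlist actually isolates.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class CaptureRecord:
    """One captured frame and the (hypothetical) tags logged for it on set."""
    filename: str
    product: str
    lighting_setup: str
    camera_angle_deg: int
    background: str

    def caption(self, trigger: str = "brandx_style") -> str:
        # Build the caption from the tags so wording stays identical across frames.
        return (
            f"{trigger}, {self.product}, {self.lighting_setup} lighting, "
            f"{self.camera_angle_deg} degree angle, {self.background} background"
        )


def to_jsonl(records: list[CaptureRecord]) -> str:
    """Serialise records, one JSON object per line, with the derived caption."""
    return "\n".join(
        json.dumps({**asdict(r), "caption": r.caption()}) for r in records
    )


# Example frames (made-up values for illustration only).
records = [
    CaptureRecord("bottle_0001.tif", "amber glass bottle", "softbox key left", 0, "white seamless"),
    CaptureRecord("bottle_0002.tif", "amber glass bottle", "softbox key left", 15, "white seamless"),
]
manifest = to_jsonl(records)
print(manifest)
```

Deriving captions from structured fields means a typo or a renamed lighting setup is fixed once, in one place, instead of across hundreds of frames.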

“What’s revolutionising our workflow isn’t replacing creativity with AI - it’s how we’re feeding our creativity into these models, and using the data after the shoot day!”

Technical specifications for effective data capture

When shooting for LoRA training data, technical precision matters significantly more than when shooting purely for creative editing.

A retail support brand was struggling with inconsistent branded product imagery across global markets. We established a standardised data capture protocol during their main campaign shoot. By controlling variables and systematically capturing variations, we created a product-specific LoRA that now ensures brand consistency, even when images are generated by different regional teams.

To maximise your data quality:

- Use consistent lighting setups with minimal variation (unless lighting variation is specifically what you’re training)

- Maintain fixed camera positions with controlled, incremental movements

- Capture clean background plates separately

- Shoot LiDAR and photogrammetry at the same time

- Include colour and specular calibration cards in setup shots
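
One way to hold yourself to this checklist is to log camera and lighting settings per frame and flag anything that drifted when it shouldn't have. The sketch below assumes each frame's settings are available as a simple dictionary; the field names (`white_balance_k`, `iso`, `aperture`, `camera_angle_deg`) and values are made up for illustration, and in practice might come from your DIT's logs or image metadata.

```python
def inconsistent_fields(frames, allowed_to_vary=frozenset()):
    """Return {field: distinct values} for settings that vary but shouldn't.

    frames: list of dicts, one per captured frame.
    allowed_to_vary: fields that are deliberately changed in this sequence.
    """
    problems = {}
    fields = set().union(*(f.keys() for f in frames)) - set(allowed_to_vary)
    for field in sorted(fields):
        values = {f.get(field) for f in frames}
        if len(values) > 1:
            problems[field] = sorted(values, key=str)
    return problems


# A sequence where only the camera angle is meant to change (assumed values).
frames = [
    {"white_balance_k": 5600, "iso": 100, "aperture": "f/8", "camera_angle_deg": 0},
    {"white_balance_k": 5600, "iso": 100, "aperture": "f/8", "camera_angle_deg": 15},
    {"white_balance_k": 5200, "iso": 100, "aperture": "f/8", "camera_angle_deg": 30},
]
issues = inconsistent_fields(frames, allowed_to_vary={"camera_angle_deg"})
print(issues)  # {'white_balance_k': [5200, 5600]}
```

Running a check like this before the set is struck is far cheaper than discovering a white balance drift after the model has already learned it.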

“The garbage-in-garbage-out principle is magnified with LoRAs. Every inconsistency in your training data becomes a permanent feature of your model.”

Efficient capture workflows that don’t break the bank

One concern I constantly hear is: “Won’t this dramatically increase our production costs?”

I mean, it’s not free. But the fact of the matter is, it’s orders of magnitude cheaper than running individual shoots time and time again.

The key is integration, not addition. Modern productions can capture training data alongside primary content creation with minimal overhead.

Maintaining the human touch

Let’s address the elephant in the room - many creatives fear that AI will diminish the art of production. In reality, when implemented properly, the opposite happens.

It gives us a wider gamut of creative freedom after the hectic shoot window, that’s all.

By automating the repetitive aspects of production, we can expand the lifespan of a content capture period with well-trained models.

Next steps

I encourage you to start small - identify one upcoming production where you can implement a simple data capture protocol. Focus on consistency and quality rather than quantity for your first attempt.

Remember, you’re not just shooting content; you’re teaching your brand to an AI.